AI is not just transforming compute; it is also rewriting the thermal limits of data centers.

As workloads shift from CPUs to high-performance GPUs, the amount of heat generated inside racks is rising dramatically. Training large AI models and running continuous inference require dense clusters of accelerators operating at full capacity, pushing power consumption and heat output to levels traditional infrastructure was never designed to handle.

What was once a manageable engineering challenge is quickly becoming a bottleneck.

Data centers that previously operated at modest rack densities are now facing 50-100 kW or more per rack, creating concentrated heat zones that standard air-cooling systems struggle to dissipate efficiently. The result is not just higher temperatures but also a greater risk of performance throttling, hardware stress, and operational inefficiency.

This has led to what many in the industry are beginning to describe as a cooling crisis.

But the challenge goes beyond simply removing heat. It’s about rethinking how data centers are designed, powered, and operated in an era where thermal density is scaling faster than infrastructure can adapt.

The question is no longer whether cooling needs to evolve.

It’s whether it can keep pace with AI’s relentless growth.

How Is AI Redefining Thermal Density?

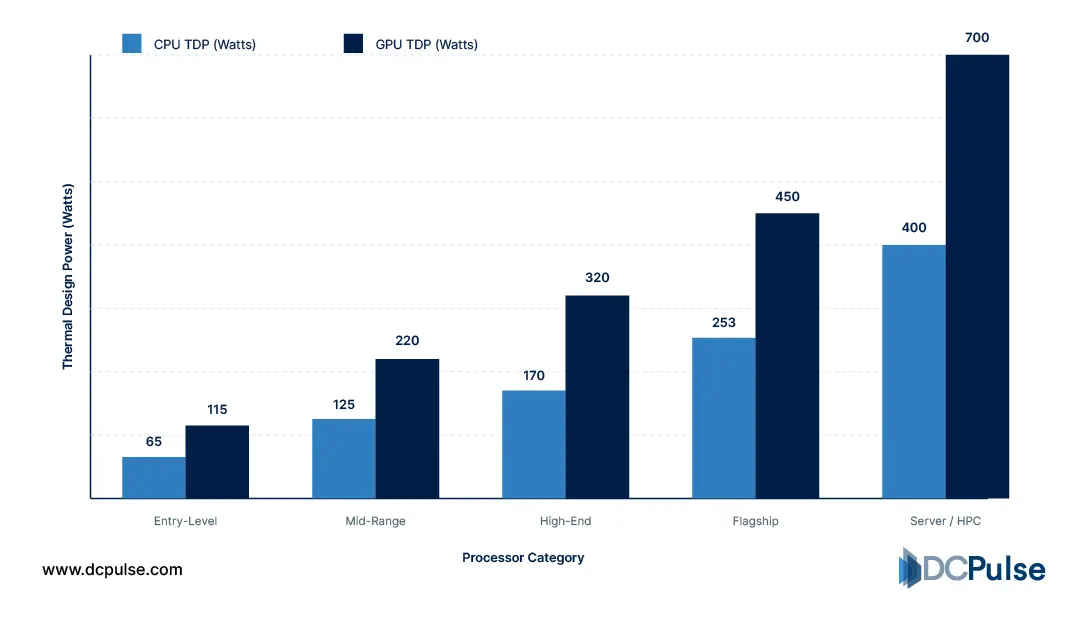

The shift to AI workloads is fundamentally changing how heat is generated inside data centers. Unlike traditional CPU-based systems, modern AI infrastructure relies heavily on GPUs, which draw significantly more power and therefore generate far more heat per chip.

Research from the Uptime Institute shows that GPU power levels are rising rapidly, with some accelerators exceeding 1 kW per chip, driving a sharp increase in thermal output.

CPU vs. GPU Thermal Output (TDP in Watts)

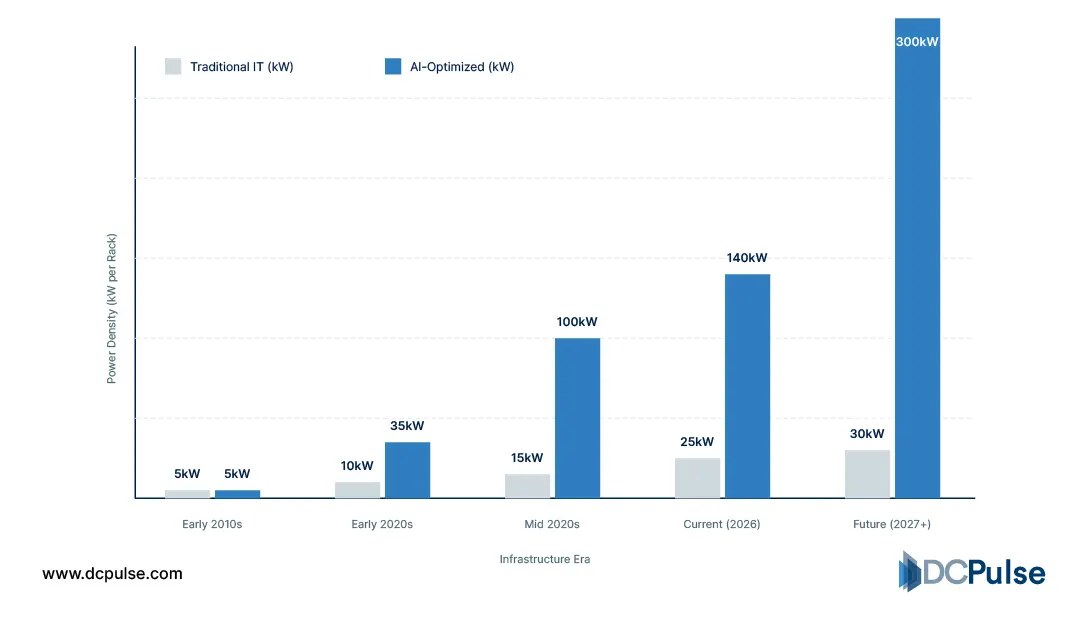

This shift is directly impacting rack density. Traditional racks have historically operated at around 4-10 kW, but AI-driven deployments are now pushing well beyond that. High-density AI systems today commonly exceed 40 kW per rack, with some advanced configurations already moving toward 100 kW and beyond.

Rack Density Evolution - Traditional vs. AI (kW per Rack)

The reason is architectural. AI workloads require tightly coupled GPU clusters to maximize performance, concentrating compute and, therefore, heat into smaller physical footprints. This creates intense, localized thermal zones that are far more difficult to cool.
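To see how quickly clustering adds up, here is a minimal back-of-the-envelope sketch in Python. The function name, the 8-accelerator server, the four servers per rack, and the 1.3 overhead factor (for CPUs, memory, networking, and fans) are illustrative assumptions rather than figures from any specific vendor. Only the roughly 1 kW per accelerator comes from the text above.

```python
def rack_power_kw(accelerators_per_server: int,
                  accelerator_power_w: float,
                  servers_per_rack: int,
                  overhead_factor: float = 1.3) -> float:
    """Estimate total rack power in kW.

    overhead_factor is an assumed multiplier covering everything in the
    server that is not an accelerator (CPUs, memory, NICs, fans).
    """
    if min(accelerators_per_server, servers_per_rack) < 0:
        raise ValueError("counts must be non-negative")
    watts = (accelerators_per_server * accelerator_power_w
             * overhead_factor * servers_per_rack)
    return watts / 1000.0


if __name__ == "__main__":
    # Hypothetical rack: 4 servers, each with 8 accelerators at ~1 kW.
    print(f"{rack_power_kw(8, 1000.0, 4):.1f} kW")
```

Under these assumptions, just four servers already land slightly above the 40 kW mark, and nearly all of that electrical power ends up as heat that must be removed from a single rack footprint.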

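At the same time, traditional air-cooling systems are reaching their limits. Air carries relatively little heat per unit volume, so for a fixed temperature rise the airflow required grows in direct proportion to the heat load, and the fan power needed to move that air grows faster still. Moving enough air to dissipate this level of heat becomes increasingly inefficient as densities rise.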
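The scale of the airflow problem can be sketched with the standard sensible-heat relation, airflow = P / (ρ · c_p · ΔT). The Python below is a rough estimate, not a design tool. The air properties are textbook approximations for roughly room temperature at sea level, and the 15 K inlet-to-outlet temperature rise is an assumed value chosen only for illustration.

```python
AIR_DENSITY_KG_M3 = 1.2       # approximate, ~20 °C at sea level
AIR_CP_J_PER_KG_K = 1005.0    # approximate specific heat of air
M3S_TO_CFM = 2118.88          # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(heat_w: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry away heat_w at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


if __name__ == "__main__":
    for rack_kw in (10, 40, 100):
        flow = required_airflow_m3s(rack_kw * 1000.0, delta_t_k=15.0)
        print(f"{rack_kw:>3} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

With these assumed values, a 10 kW rack needs a little over half a cubic meter of air per second, while a 100 kW rack needs ten times that through the same physical footprint.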

The result is a clear inflection point: data centers are no longer dealing with distributed, moderate heat loads; they are facing extreme, concentrated thermal density driven by AI infrastructure.

Liquid Takes Over: The New Era of Data Center Cooling

As AI workloads push thermal density to extreme levels, the industry is rapidly shifting toward liquid-based cooling technologies as a necessity, not an option.

Recent industry analysis shows that liquid cooling is moving from niche adoption to a core requirement for AI infrastructure, as traditional air systems can no longer handle rising heat loads.

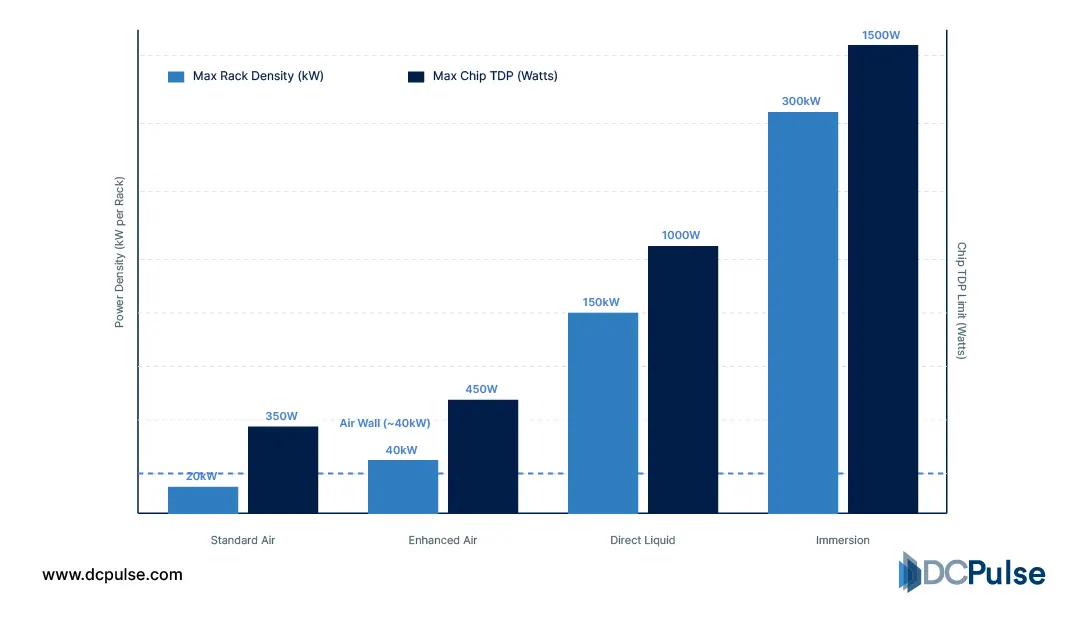

Air Cooling Limits vs. Liquid Cooling Capability (2026)

The most widely adopted approach today is direct-to-chip liquid cooling, where coolant circulates through cold plates mounted directly on the processors. This method removes heat at the source, enabling systems to sustain higher performance without thermal throttling.
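A quick calculation shows why liquid is so much more effective at carrying heat away from a chip. The sketch below assumes plain water as the coolant and a 10 K temperature rise across the cold plate. Both are simplifying assumptions, since real loops often use water-glycol mixtures and different temperature deltas. The function name is hypothetical.

```python
WATER_CP_J_PER_KG_K = 4186.0   # approximate specific heat of water
WATER_DENSITY_KG_M3 = 997.0    # approximate density near room temperature


def required_coolant_flow_lpm(heat_w: float, delta_t_k: float) -> float:
    """Water flow in liters per minute needed to absorb heat_w at a given coolant temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_M3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


if __name__ == "__main__":
    print(f"1 kW chip:   {required_coolant_flow_lpm(1_000.0, 10.0):.2f} L/min")
    print(f"100 kW rack: {required_coolant_flow_lpm(100_000.0, 10.0):.0f} L/min")
```

Under these assumptions, a 1 kW accelerator needs only about a liter and a half of water per minute, a flow that fits in narrow tubing, whereas removing the same heat with air requires moving volumes thousands of times larger.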

At the same time, immersion cooling is gaining traction for ultra-high-density environments. In this approach, servers are submerged in a dielectric liquid, allowing for significantly higher efficiency and density compared to air-based systems.

Real-world momentum is already visible. Companies are investing heavily in liquid cooling technologies, with deal activity and partnerships accelerating as AI demand rises.

Even at the chip level, innovation is accelerating. New cooling techniques, such as microfluidic channels embedded directly into processors, are being developed to handle extreme thermal loads more efficiently than traditional methods.

The direction is clear: cooling is no longer a supporting system. It is becoming a primary constraint and innovation frontier, driven by the thermal demands of AI infrastructure.

Who’s Adapting Fastest?

The shift toward advanced cooling is no longer theoretical; major industry players are actively redesigning infrastructure to handle AI-driven thermal density.

Hyperscalers are leading this transition. Companies like Microsoft and Google are increasingly deploying liquid cooling systems in their data centers to support high-density AI workloads. Industry reporting indicates that traditional air-cooled designs are being replaced or supplemented with liquid-based solutions as GPU clusters scale.

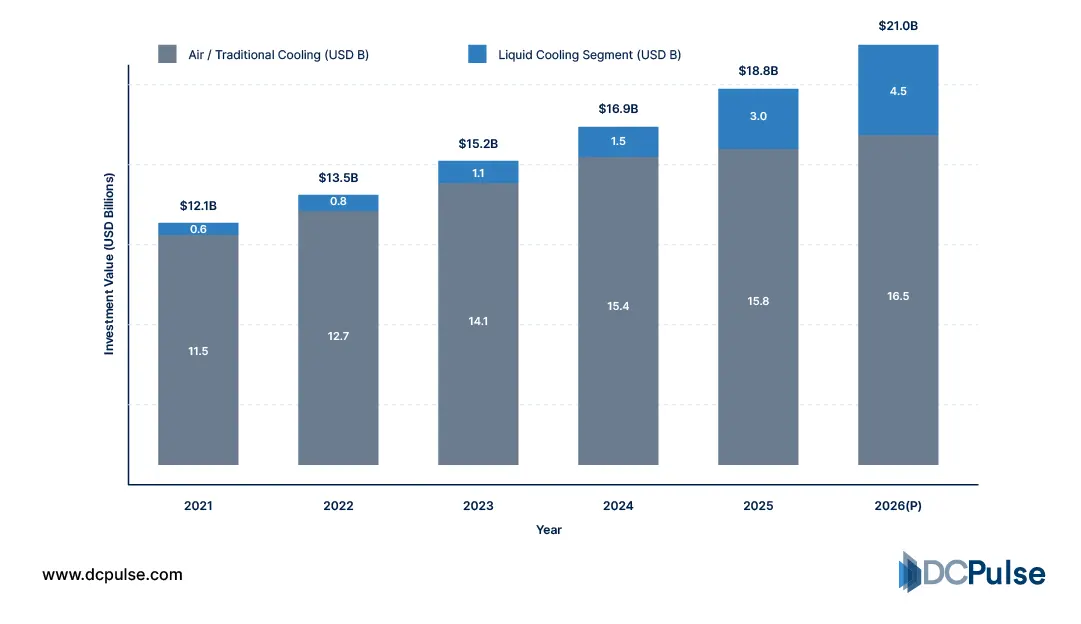

Recent developments also highlight how quickly this shift is accelerating. A KKR-backed data center cooling firm has drawn multibillion-dollar interest, reflecting growing investor confidence in cooling infrastructure as a critical component of AI growth.

Global Data Center Cooling Infrastructure Investment (2021-2026)

Hardware vendors are also adapting. Companies are redesigning servers and racks specifically for liquid cooling compatibility, moving away from legacy air-cooled form factors. This includes support for higher power densities and integrated cooling pathways.

Chip-level work points in the same direction. The embedded microfluidic approaches noted earlier manage heat directly within the processor, signaling a shift toward cooling as part of chip design itself.

However, not all deployments are fully scaled. While hyperscalers are moving quickly, many implementations across the broader industry are still in pilot or early rollout stages.

The trend is clear: the fastest adopters are treating cooling not as an upgrade, but as a fundamental redesign requirement for AI infrastructure.

Can Cooling Keep Up with AI?

Cooling can keep up with AI, but only through a fundamental shift in how data centers are designed.

The technologies needed to handle rising thermal density already exist. Liquid cooling, hybrid architectures, and chip-level innovations are proving capable of managing increasingly powerful AI workloads. Hyperscalers are demonstrating that high-density infrastructure can operate efficiently when cooling is treated as a core design element rather than an afterthought.

However, scalability remains the key challenge. Retrofitting existing data centers is complex and costly, and not all facilities can support liquid cooling without significant redesign. At the same time, deploying new high-density infrastructure requires coordination across hardware, cooling systems, and facility design.

Cost is another factor. Advanced cooling solutions require higher upfront investment, even if they improve long-term efficiency. For many operators, especially outside hyperscale environments, adoption will be gradual.

The most realistic outcome is a split path. Leading operators will move quickly toward liquid and hybrid cooling, while the broader industry transitions more slowly.

Cooling will keep pace with AI, but not evenly. It will evolve fastest where demand is highest, making advanced cooling a defining feature of next-generation data centers rather than a universal standard.