The race to build data centers is no longer just about scale; it’s about speed.

Across global markets, timelines that once stretched 18 to 36 months are now colliding with demand that materializes in quarters, not years. AI workloads are accelerating capacity needs faster than utilities can provision power, land can be permitted, and traditional construction can deliver operational facilities. In this widening gap, a different approach is gaining traction.

Modular data centers, once limited to edge or temporary deployments, are now emerging as a serious strategy for rapid capacity expansion. Built off-site in controlled factory environments and delivered as near-ready units, they compress construction timelines into significantly shorter deployment cycles.

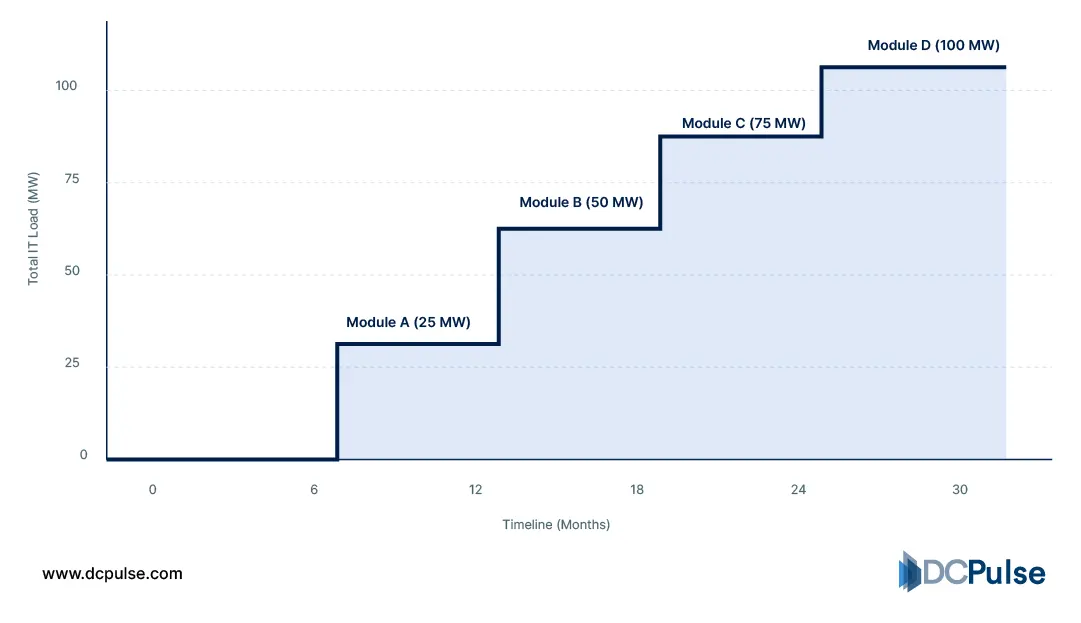

More importantly, they change how infrastructure scales. Instead of committing to large, upfront builds, operators can deploy capacity incrementally, aligning investment more closely with real demand.

But as power densities rise and AI workloads push infrastructure limits, one question becomes unavoidable:

Can modular data centers deliver both speed and performance at scale, or are they still a compromise?

From Containers to Capacity Strategy

Modular data centers are no longer defined by containers; they are defined by how efficiently they deliver deployable capacity.

At a structural level, modular systems are built using prefabricated, factory-integrated components that combine power, cooling, and IT into standardized units. A formal definition from a Schneider Electric white paper describes a prefabricated modular data center as “a pre-engineered, factory-integrated, and pre-tested assembly of subsystems… mounted on a skid or enclosure.”

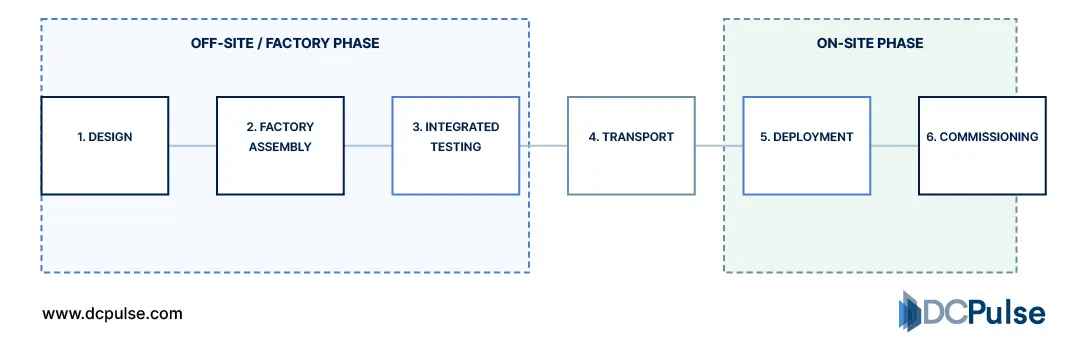

This factory-first model fundamentally shifts how data centers are built. Instead of assembling systems entirely on-site, modular components are manufactured, integrated, and tested off-site, then transported and deployed. Vertiv similarly describes these systems as “built and assembled off-site” with integrated power and thermal infrastructure, which significantly reduces on-site work.

Figure: Traditional vs. Modular Deployment Timeline (2026 Benchmarks)

Functionally, this architecture enables a shift toward modular scalability. Instead of constructing a single large facility, operators deploy capacity in discrete units that can be added, replaced, or upgraded over time. Even early architectural models and patents describe modular data centers as systems composed of self-contained modules (power, cooling, and compute) that are built in factories and assembled on-site.

Figure: Modular Capacity Expansion Model (Stepwise Deployment)
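
The stepwise model described above can be sketched in a few lines of Python. This is a minimal illustration, not a planning tool: the 500 kW module size and the quarterly demand figures are hypothetical placeholders, and the function names are invented for this example. The point is only that capacity is added in discrete units as demand crosses each threshold.

```python
import math


def modules_needed(demand_kw: float, module_kw: float) -> int:
    """Smallest number of identical modules whose combined capacity covers demand."""
    if module_kw <= 0:
        raise ValueError("module_kw must be positive")
    return max(0, math.ceil(demand_kw / module_kw))


def expansion_plan(demand_by_period, module_kw):
    """Return (period, modules_to_add, installed_kw) for each period.

    Modules are only ever added, never removed, so installed capacity
    does not shrink when demand dips.
    """
    installed = 0
    plan = []
    for period, demand_kw in enumerate(demand_by_period, start=1):
        required = modules_needed(demand_kw, module_kw)
        to_add = max(0, required - installed)
        installed += to_add
        plan.append((period, to_add, installed * module_kw))
    return plan


# Hypothetical demand forecast (kW per quarter) and a hypothetical 500 kW module.
DEMAND_KW = [300, 900, 1400, 1200, 2600]

for period, added, capacity in expansion_plan(DEMAND_KW, module_kw=500):
    print(f"Q{period}: add {added} module(s), installed capacity {capacity} kW")
```

In this sketch, investment follows demand one module at a time, and a quarter with lower demand simply triggers no new deployment.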

This also changes execution. Traditional builds follow sequential construction, while modular approaches allow parallel workflows: site preparation and module fabrication happen simultaneously, which shortens deployment timelines and improves predictability.
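
The difference between sequential and parallel execution comes down to simple arithmetic, sketched below. The three-phase breakdown is a deliberate simplification, and the phase lengths are hypothetical values chosen only to show the calculation; they are not benchmarks from any vendor or project.

```python
def sequential_duration(site_prep: float, build: float, commissioning: float) -> float:
    """Phases run back to back, as in a conventional on-site build."""
    return site_prep + build + commissioning


def parallel_duration(site_prep: float, fabrication: float, commissioning: float) -> float:
    """Site preparation and factory fabrication overlap.

    Installation and commissioning can only start once both are finished,
    so the longer of the two sets the pace.
    """
    return max(site_prep, fabrication) + commissioning


# Hypothetical phase lengths in months, chosen only to illustrate the arithmetic.
SITE_PREP, BUILD, COMMISSIONING = 6, 9, 3

print("sequential:", sequential_duration(SITE_PREP, BUILD, COMMISSIONING), "months")
print("parallel:  ", parallel_duration(SITE_PREP, BUILD, COMMISSIONING), "months")
```

Under these assumptions the time saved equals the shorter of the two overlapped phases, so the benefit shrinks when one phase dominates the schedule.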

What has changed is not just the technology, but its role. Modular infrastructure is no longer an alternative; it is becoming a core method for planning and delivering data center capacity.

Engineering Speed Without Compromise

If modular data centers were once defined by speed, they are now being re-engineered to handle performance at scale.

The biggest shift is happening inside the module itself. Early modular designs were optimized for lower-density workloads, but modern systems are now being built for high-density AI environments, where power and thermal demands are significantly higher. Industry analysis shows that AI workloads are driving extreme requirements for power and cooling, pushing traditional data center designs beyond their limits.

One of the most critical developments is the integration of liquid cooling into modular architectures. As rack densities rise, often exceeding what air cooling can efficiently handle, liquid-based systems such as direct-to-chip cooling are becoming essential for maintaining performance and stability.

This shift is not theoretical; it is already reflected in emerging solutions. New modular platforms are being designed specifically for AI workloads, integrating liquid cooling, high-efficiency power systems, and modular assembly to support dense compute environments.

At the same time, prefabrication itself is evolving. Modular systems are increasingly treated as fully integrated, pre-engineered infrastructure blocks, combining compute, cooling, and power into single deployable units. This approach enables faster deployment while maintaining consistency and reliability across installations.

Figure: Modular Factory Integration & Pre-Testing Workflow (2026)

Another emerging shift is the alignment between modular infrastructure and energy constraints. As AI demand grows, power availability, not just compute, has become a primary bottleneck, influencing where and how data centers are deployed.

However, this evolution introduces a new tension: standardization vs customization. Modular systems depend on repeatable designs to maintain speed and efficiency, while AI workloads demand increasingly specialized infrastructure configurations.

What’s emerging is not just a faster way to build but a more advanced infrastructure model where density, cooling, and deployment speed are engineered together.

From Experimentation to Scaled Deployment

Modular data centers are no longer being tested; they are being actively deployed in real-world scenarios.

One clear example comes from Microsoft, which introduced its Azure Modular Datacenter (MDC) to deliver cloud capabilities in remote and challenging environments, including disaster response and mobile deployments. This reflects a shift toward deployable, self-contained infrastructure rather than fixed facilities.

Beyond hyperscalers, modular deployments are already being used across government, telecom, and industrial sectors. Real-world case studies show containerized modular systems deployed for national infrastructure, including traffic monitoring and public-sector digital platforms, with capacities ranging from tens to hundreds of kilowatts and built with redundancy (e.g., N+1 power and cooling).
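
To make the N+1 notation concrete: N is the number of units needed to carry the load, and one extra unit is installed so the load is still covered if any single unit fails. The sketch below assumes identical units; the 120 kW load and 50 kW unit size are hypothetical and not taken from the case studies mentioned above.

```python
import math


def units_for_n_plus_1(load_kw: float, unit_kw: float) -> int:
    """Units to install so the load is still covered with one unit out of service."""
    if load_kw <= 0 or unit_kw <= 0:
        raise ValueError("load_kw and unit_kw must be positive")
    n = math.ceil(load_kw / unit_kw)  # N: units needed to carry the load
    return n + 1                      # +1: one redundant unit


def survives_single_failure(load_kw: float, unit_kw: float, installed_units: int) -> bool:
    """True if the remaining units still cover the load after losing one unit."""
    return (installed_units - 1) * unit_kw >= load_kw


# Hypothetical figures for illustration only.
LOAD_KW, UNIT_KW = 120, 50

installed = units_for_n_plus_1(LOAD_KW, UNIT_KW)
print("units installed:", installed)
print("survives one failure:", survives_single_failure(LOAD_KW, UNIT_KW, installed))
```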

At the same time, modular strategies are being driven by speed-to-deployment requirements. Typical modular projects, from design to commissioning, can be completed in a matter of months rather than years, particularly for pre-integrated containerized systems.

Figure: Modular Deployment Cycle: Compressed Timeline (2025-2026)

Another important shift is where these systems are being deployed. Emerging projects are exploring non-traditional locations, including dense urban environments. For example, a consortium in Tokyo is testing modular data centers under railway infrastructure to address land and power constraints, highlighting how modular systems enable deployment in spaces where traditional builds are not feasible.

What stands out is the intent shift. Modular data centers are no longer niche or temporary; they are being used as practical deployment tools across industries where speed, flexibility, and location constraints define infrastructure strategy.

Is Modular the New Default for Data Center Deployment?

Modular data centers have clearly moved beyond niche use cases, but they are not a universal replacement for traditional builds.

Their advantage lies in speed, flexibility, and phased deployment. For operators facing immediate capacity pressure, especially from AI workloads, modular infrastructure helps bridge the gap between demand and delivery. It enables faster rollout, better alignment with power availability, and reduced on-site complexity.

However, limitations remain. Large hyperscale campuses still benefit from economies of scale, deeper customization, and long-term optimization that modular systems struggle to match. As infrastructure scales into multi-gigawatt environments, traditional builds continue to offer advantages in integration and lifecycle efficiency.

What’s emerging is a hybrid model. Operators are combining core facilities with modular expansion layers, using prefabricated units to accelerate deployment while maintaining long-term scalability.

The takeaway is clear:

Modularity is not replacing traditional data centers; it is reshaping how capacity is delivered, introducing speed and flexibility into an industry defined by long build cycles.