The data center is no longer just a place to store and process data; it is becoming a production engine for artificial intelligence.

As AI workloads surge, hyperscale infrastructure is undergoing a fundamental transformation. Training large models, running continuous inference, and managing massive data pipelines now demand far more than traditional cloud architectures were designed to handle. The shift is visible in rising rack densities, GPU-dominated clusters, and networking systems built for constant, high-speed data exchange rather than simple request-response traffic. NVIDIA has described this transition as the move toward “AI factories,” where computing infrastructure operates more like an industrial system than a conventional data center.

In this model, data flows through tightly integrated pipelines, models are continuously trained and refined, and outputs are generated at scale, mirroring the efficiency and throughput of manufacturing processes. It is a shift from static infrastructure to dynamic, production-oriented systems.

But the concept raises an important question.

Are AI factories a genuinely new class of data center, purpose-built for the AI era?

Or are they simply the next phase of hyperscale evolution, wrapped in a new label?

The answer will define how future infrastructure is designed, deployed, and scaled.

What Defines an AI Data Center Today?

AI data centers today are defined by one dominant shift: extreme power density driven by GPU-based computing. Unlike traditional environments built around CPUs, modern AI infrastructure is designed around tightly packed accelerator clusters optimized for continuous training and inference workloads.

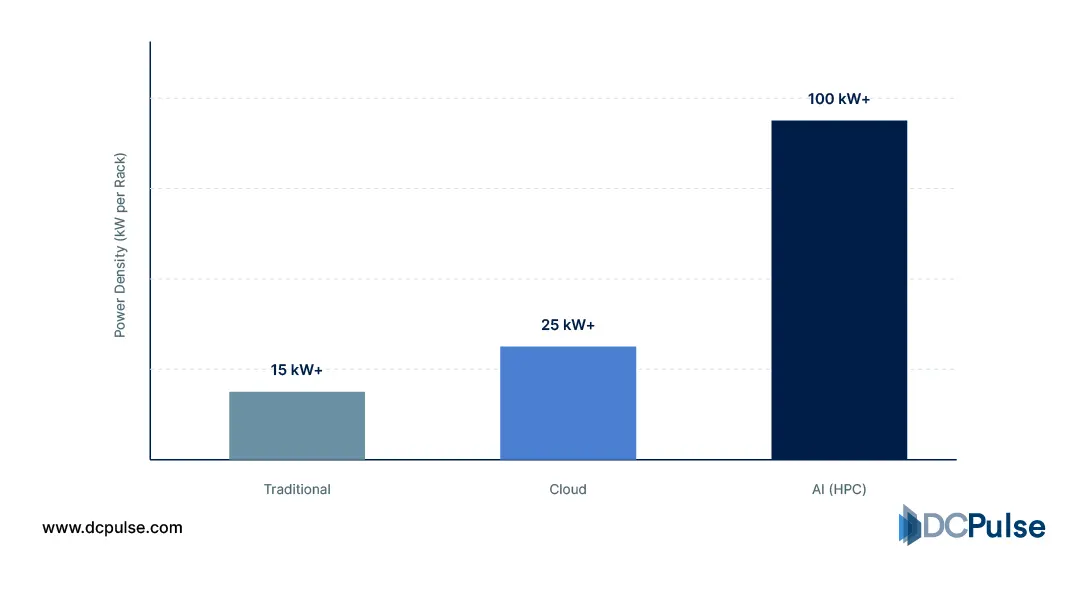

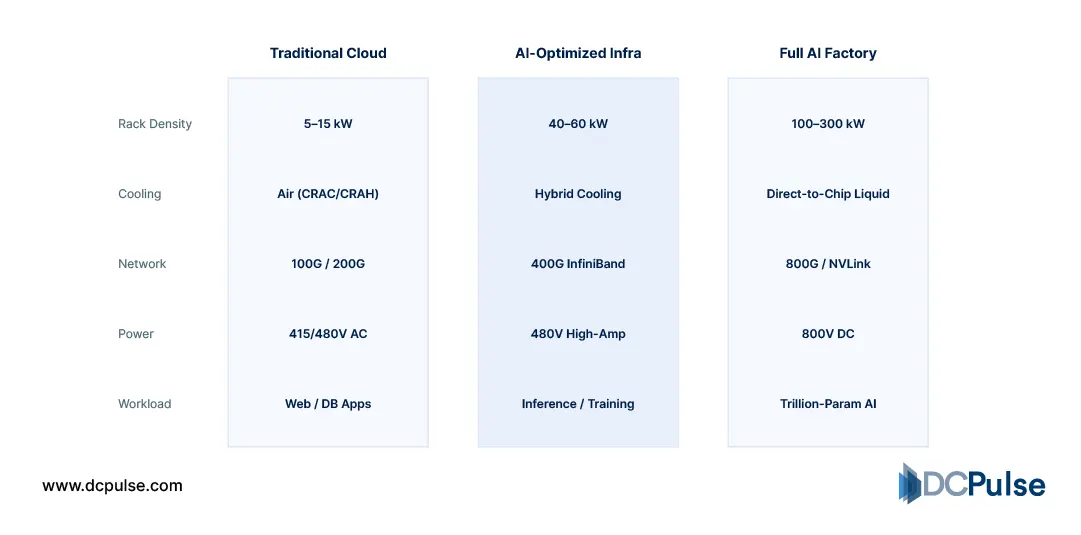

This shift is most visible at the rack level. Traditional data centers typically operated at 5 to 15 kW per rack, but AI deployments are now pushing 50 to 100 kW, with some environments already exceeding that range.

Data Center Rack Density Evolution (2020–2026)
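To put the rack-density figures above in perspective, the short sketch below works out how many racks a fixed IT load would occupy at each density. The 1 MW load and the helper names `racks_needed` and `rack_range` are illustrative assumptions, not figures or tools from any vendor; only the kW ranges come from the text.

```python
import math

# Per-rack power ranges quoted in the article (kW per rack).
TRADITIONAL_KW = (5, 15)
AI_KW = (50, 100)


def racks_needed(it_load_kw: float, rack_density_kw: float) -> int:
    """Whole racks required to host an IT load at a given per-rack density."""
    if rack_density_kw <= 0:
        raise ValueError("rack density must be positive")
    return math.ceil(it_load_kw / rack_density_kw)


def rack_range(it_load_kw: float, density_range_kw: tuple) -> tuple:
    """Return (fewest, most) racks for a density range given as (low_kw, high_kw)."""
    low_kw, high_kw = density_range_kw
    # The highest density needs the fewest racks; the lowest needs the most.
    return racks_needed(it_load_kw, high_kw), racks_needed(it_load_kw, low_kw)


if __name__ == "__main__":
    load_kw = 1000  # hypothetical 1 MW of IT load, chosen only for illustration
    print("traditional racks:", rack_range(load_kw, TRADITIONAL_KW))
    print("AI racks:", rack_range(load_kw, AI_KW))
```

The same load fits in far fewer racks at AI densities, but each of those racks must be supplied with, and cooled for, several times the power, which is why the pressure shows up in power distribution and cooling rather than in floor space.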

Networking has also evolved to support AI workloads. Instead of the north-south (client-to-server) traffic patterns that dominate conventional facilities, AI clusters generate heavy east-west (server-to-server) traffic, requiring high-bandwidth, low-latency interconnects to move large datasets and synchronize model parameters across nodes.
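A rough sense of the east-west volume comes from estimating how much data each node exchanges to synchronize parameters once. The sketch below assumes a ring all-reduce, one common collective algorithm (the article does not name a specific one), in which each node sends about 2(N-1)/N times the payload size. The model size, node count, and link speed are hypothetical, and the estimate ignores latency, protocol overhead, and any overlap with computation.

```python
def ring_allreduce_bytes_per_node(payload_bytes: float, num_nodes: int) -> float:
    """Bytes each node sends in one ring all-reduce of `payload_bytes`.

    A ring all-reduce runs a reduce-scatter followed by an all-gather; each
    phase sends (N - 1) / N of the payload, giving 2 * (N - 1) / N in total.
    """
    if num_nodes < 1:
        raise ValueError("need at least one node")
    return 2 * (num_nodes - 1) / num_nodes * payload_bytes


def transfer_seconds(bytes_sent: float, link_gbps: float) -> float:
    """Idealized time to push `bytes_sent` over a link of `link_gbps` gigabits per second."""
    if link_gbps <= 0:
        raise ValueError("link speed must be positive")
    return bytes_sent * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    # Hypothetical example values, not taken from the article.
    params = 1_000_000_000   # 1 billion parameters
    bytes_per_param = 2      # 16-bit values
    nodes = 8
    link_gbps = 400

    sent = ring_allreduce_bytes_per_node(params * bytes_per_param, nodes)
    print(f"per-node traffic per sync: {sent / 1e9:.2f} GB")
    print(f"idealized transfer time:  {transfer_seconds(sent, link_gbps) * 1e3:.1f} ms")
```

Because this exchange repeats on every training step and involves every node at once, the interconnect is sized for sustained all-to-all load rather than for bursts of client requests.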

Power and cooling systems are under increasing strain as a result. Industry data shows that while most facilities still average below 10 kW per rack, AI workloads are forcing a rapid redesign of infrastructure to support high-density zones.

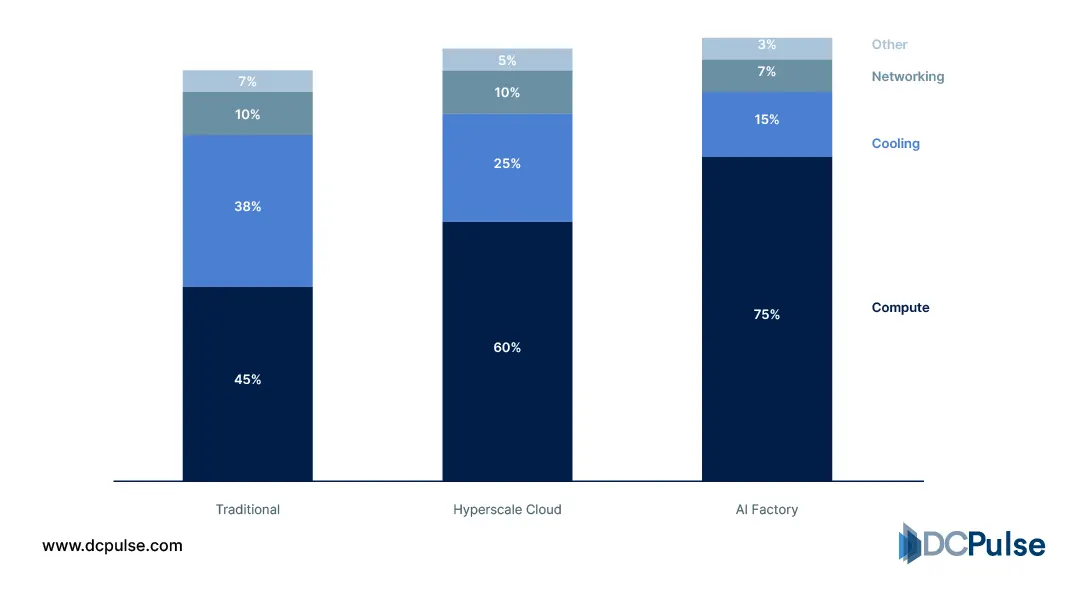

Data Center Power Distribution (2026 Profiles)

The outcome is clear: AI data centers are no longer general-purpose facilities. They are specialized, high-density systems engineered to handle sustained, compute-intensive workloads at scale.

Inside the AI Factory: What Makes It Different?

The term “AI factory” describes more than marketing; it captures a fundamental architectural shift in how data centers are designed and operated for AI workloads. Unlike traditional facilities built for discrete tasks, AI factories are optimized for continuous, high-throughput AI processing, a production-oriented model where compute, data movement, and orchestration are tightly integrated.

At the hardware layer, AI factories use specialized infrastructure tuned for massive parallelism and sustained performance. This means dense clusters of GPUs and other accelerators working in concert, with power and cooling systems designed to handle sustained near-peak loads rather than the sporadic peaks typical of legacy workloads.

[Figure: The AI Factory Infrastructure Stack (2026 Verified)]

Software and orchestration are equally important. AI factories embed continuous workflow pipelines that link data ingestion, model training, evaluation, and deployment in a loop, rather than as isolated steps. This reduces idle time and ensures resources are used efficiently across the entire lifecycle.

Industry practitioners also emphasize that AI factories support real-time resource scaling and automation, enabling systems to reallocate compute dynamically based on workload demands, in contrast with the static provisioning of traditional data centers.
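The idea of reallocating compute as demand shifts can be illustrated with a toy allocator. This is a minimal sketch under stated assumptions, not how any particular scheduler works: the `reallocate` function, the workload names, and the pool size are all hypothetical, and the policy (proportional sharing with largest-remainder rounding) is just one simple choice.

```python
def reallocate(total_gpus: int, demand: dict) -> dict:
    """Split a fixed GPU pool across workloads in proportion to current demand.

    If the pool covers all demand, every workload gets what it asked for.
    Otherwise each gets its proportional share, rounded down, and leftover
    GPUs go to the workloads with the largest fractional remainders.
    """
    if total_gpus < 0 or any(d < 0 for d in demand.values()):
        raise ValueError("pool size and demands must be non-negative")
    total_demand = sum(demand.values())
    if total_demand <= total_gpus:
        return dict(demand)

    shares, remainders = {}, {}
    for name, wanted in demand.items():
        # Integer arithmetic avoids floating-point rounding surprises.
        shares[name], remainders[name] = divmod(wanted * total_gpus, total_demand)

    leftover = total_gpus - sum(shares.values())
    by_remainder = sorted(remainders, key=remainders.get, reverse=True)
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares


if __name__ == "__main__":
    pool = 64  # hypothetical cluster size
    # Demand at two points in time (hypothetical numbers).
    training_heavy = {"training": 96, "inference": 24, "evaluation": 8}
    inference_heavy = {"training": 32, "inference": 80, "evaluation": 16}
    print(reallocate(pool, training_heavy))
    print(reallocate(pool, inference_heavy))
```

Under static provisioning, each workload would keep a fixed slice of the pool regardless of load; here the same 64 GPUs follow demand as it moves from training toward inference.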

In essence, an AI factory is less a place and more a production ecosystem: engineered for uninterrupted AI work, from data flow to inference output.

Who’s Building AI Factories? Inside the Industry Shift

The move toward AI factories is being led by hyperscalers and AI infrastructure vendors that are redesigning data centers around continuous AI workloads rather than traditional cloud computing patterns.

Microsoft is investing heavily in AI-optimized infrastructure through Azure, focusing on large-scale distributed training systems designed for foundation models and high-performance AI workloads.

This reflects a structural shift from general-purpose cloud computing toward AI-dedicated compute environments.

Google is advancing a similar direction through its AI Hypercomputer architecture, which integrates compute, storage, and networking into a unified system optimized for large-scale model training.

This architecture is explicitly designed to reduce bottlenecks in AI training pipelines and improve system-wide efficiency.

At the hardware layer, NVIDIA provides the dominant reference architecture for AI factories through its end-to-end AI infrastructure stack, combining GPUs, networking, and software orchestration into a unified platform.

Beyond hyperscalers, the ecosystem is transitioning gradually: most deployments outside top-tier providers are still partial or staged implementations rather than fully integrated AI factory systems.

[Figure: Infrastructure Maturity Metrics (2026)]

The industry is therefore split between organizations defining the architecture and those still adapting to it.

Are AI Factories the Future of Data Centers?

AI factories are not just a rebranding of hyperscale data centers, but neither are they an entirely separate category. They represent a natural evolution, driven by the unique demands of AI workloads.

Traditional hyperscale facilities were designed for flexibility, handling diverse applications with varying compute needs. In contrast, AI factories are purpose-built for continuous, high-intensity processing, where infrastructure is optimized for training, inference, and rapid iteration. This shift toward tightly integrated, production-style systems reflects a deeper change in how compute is consumed.

However, this transformation is not universal, at least not yet. Building a true AI factory requires significant investment in specialized hardware, advanced cooling, high-bandwidth networking, and orchestration systems. For many operators, especially outside hyperscale environments, adopting elements of this model will be more practical than fully transitioning to it.

The most likely outcome is a hybrid future. Hyperscalers and AI-native companies will continue to develop full-scale AI factories, while the broader industry integrates selective components of this architecture based on workload needs.

In that sense, AI factories are both real and evolutionary. They are not replacing data centers but redefining what the most advanced ones are becoming.