Generative AI didn’t just increase demand for compute; it made it unpredictable.

For years, data center architecture evolved around relatively stable workloads. Enterprises could forecast growth, scale infrastructure in phases, and optimize around efficiency. But generative AI has disrupted that model entirely. Training large language models requires massive, concentrated bursts of compute, while inference workloads introduce continuous, latency-sensitive demand at scale. The result is a new kind of pressure, one that traditional data centers were never designed to handle.

This shift is not just about more servers or higher utilization. It is about how compute is organized, powered, and cooled. GPU clusters are displacing CPU-based systems as the core of new capacity, power densities are rising beyond conventional thresholds, and cooling systems are being pushed to their limits. What once worked as a predictable, incremental scaling model is now struggling to keep pace with workloads that can expand dramatically in a matter of months.

The industry is no longer asking how to scale data centers.

It is asking whether the architecture itself needs to be rethought.

Were Data Centers Ever Designed for AI Workloads Like This?

Modern data centers were not built with generative AI in mind; they were optimized for predictable, CPU-driven workloads.

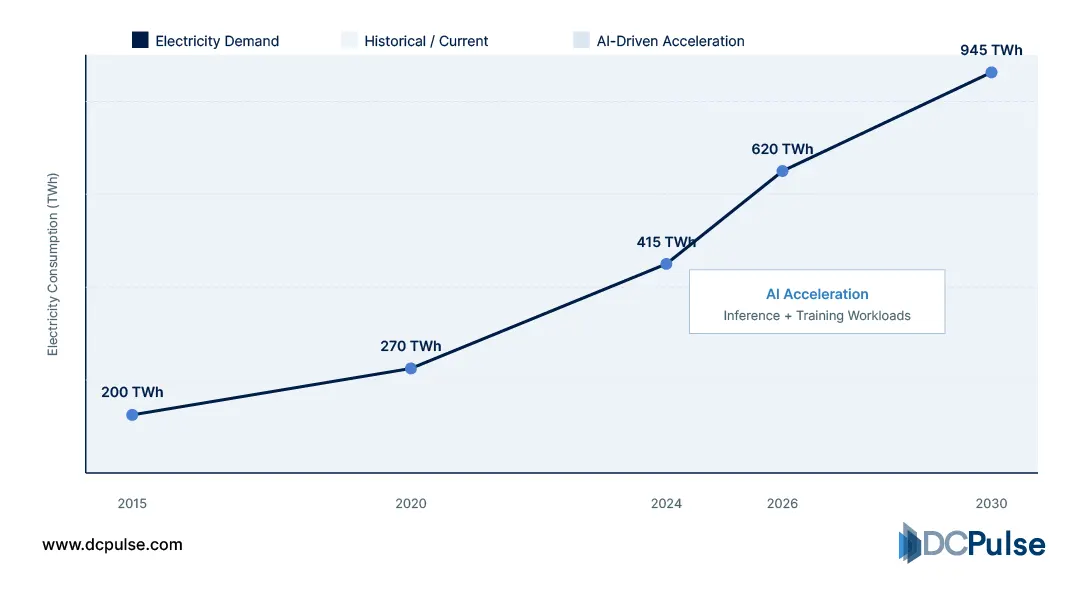

Traditional architectures evolved around steady enterprise and cloud demand, where compute loads were distributed and scaled incrementally. But that assumption is now breaking. According to the International Energy Agency, data centers consumed around 415 TWh of electricity in 2024 (~1.5% of global demand), and their consumption has been growing at around 12% per year, increasingly driven by AI workloads.

Global Data Center Electricity Demand Growth
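A quick way to see what sustained double-digit growth means is to compound it. The sketch below is illustrative arithmetic, not an IEA projection: it assumes the ~12% rate simply holds constant from the 2024 base of 415 TWh, and the names (`project`, `ASSUMED_GROWTH`) are hypothetical.

```python
# Illustrative compound-growth arithmetic; NOT an IEA forecast.
BASE_YEAR = 2024
BASE_TWH = 415.0        # IEA estimate for 2024, as quoted in the text
GLOBAL_SHARE = 0.015    # ~1.5% of global electricity demand
ASSUMED_GROWTH = 0.12   # assumption: the ~12% annual rate holds constant


def project(base: float, rate: float, years: int) -> float:
    """Compound a starting value forward at a constant annual rate."""
    return base * (1.0 + rate) ** years


# The 1.5% share implies the size of total global electricity demand.
implied_global_twh = BASE_TWH / GLOBAL_SHARE
print(f"Implied global demand in {BASE_YEAR}: ~{implied_global_twh:,.0f} TWh")

for year in (2026, 2028, 2030):
    twh = project(BASE_TWH, ASSUMED_GROWTH, year - BASE_YEAR)
    print(f"{year}: ~{twh:,.0f} TWh")
```

At a constant 12%, consumption roughly doubles in about six years, reaching around 820 TWh by 2030. Actual outcomes depend on efficiency gains, grid constraints, and how quickly AI adoption really proceeds.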

Generative AI introduces a fundamentally different workload profile. Training requires massive, concentrated GPU clusters, while inference creates continuous demand. This is pushing infrastructure away from distributed compute toward dense, tightly coupled systems.

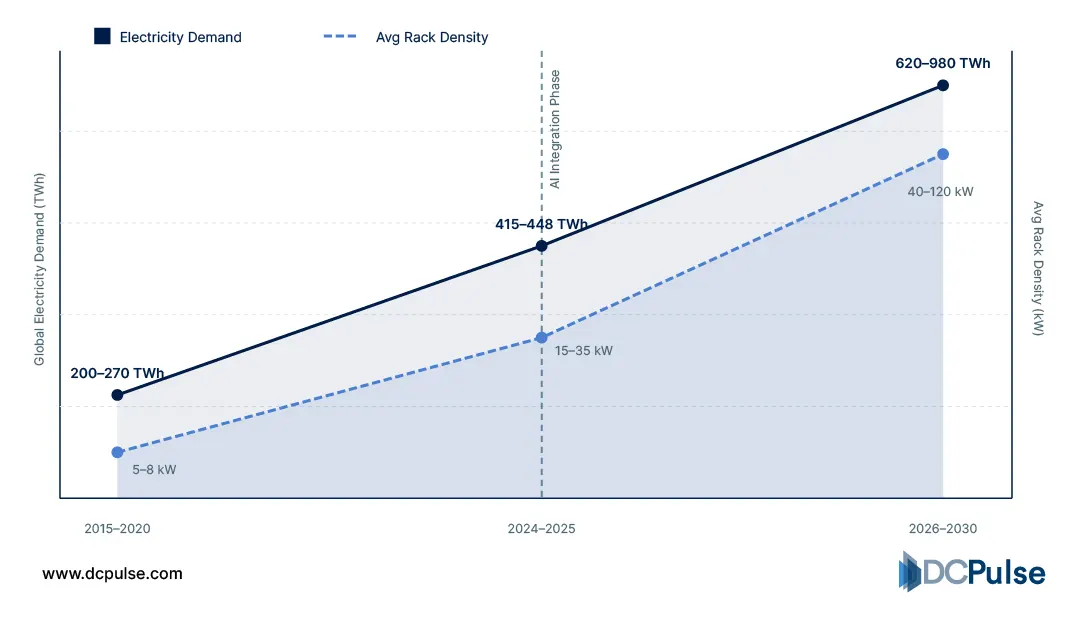

This shift is most visible in power density. Traditional racks operated at 5-10 kW, but AI systems now routinely exceed 30-100 kW per rack, with some next-generation designs going even higher.

Rack Power Density Evolution (2015-2030)
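Density changes are easier to grasp as footprint. This minimal sketch assumes a hypothetical 1 MW of IT load and counts how many racks it takes to host that load at the densities cited above, ignoring space, redundancy, and cooling overhead.

```python
import math

IT_LOAD_KW = 1_000  # hypothetical: 1 MW of IT load to place

# Per-rack densities: ends of the traditional range and of the AI range in the text.
densities_kw = {
    "traditional, 5 kW": 5,
    "traditional, 10 kW": 10,
    "AI, 30 kW": 30,
    "AI, 100 kW": 100,
}


def racks_needed(it_load_kw: float, kw_per_rack: float) -> int:
    """Racks required to host a given IT load at a given per-rack density."""
    return math.ceil(it_load_kw / kw_per_rack)


for label, kw in densities_kw.items():
    print(f"{label:>20}: {racks_needed(IT_LOAD_KW, kw):>4} racks per MW")
```

The same megawatt that once spread across 100 to 200 racks now fits in roughly 10 to 34, concentrating both power delivery and heat into a far smaller space.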

The scale of demand is also changing rapidly. According to McKinsey & Company, global data center capacity demand could grow 19-22% annually through 2030, driven largely by AI adoption, with power requirements scaling accordingly.
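Growth rates like 19-22% are easier to interpret as doubling times. The sketch below uses the McKinsey range quoted above and assumes seven years of compounding (for example, 2023 to 2030); the function names are illustrative.

```python
import math


def doubling_time(rate: float) -> float:
    """Years for a quantity growing at `rate` per year to double."""
    return math.log(2) / math.log(1 + rate)


def growth_multiple(rate: float, years: int) -> float:
    """Total growth factor after compounding `rate` for `years` years."""
    return (1 + rate) ** years


YEARS = 7  # assumption: seven years of compounding, e.g. 2023 to 2030

for rate in (0.19, 0.22):
    print(
        f"{rate:.0%}/yr: doubles every {doubling_time(rate):.1f} years, "
        f"~{growth_multiple(rate, YEARS):.1f}x over {YEARS} years"
    )
```

At these rates, capacity demand doubles roughly every three and a half to four years and grows about three- to fourfold over seven years.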

What makes this more challenging is not just scale, but behavior. AI workloads run at near-full utilization for extended periods and can introduce rapid fluctuations in power demand, making infrastructure planning far more complex than traditional steady-state environments.
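Near-full utilization matters because energy, not just peak power, drives operating cost and grid demand. This rough sketch treats a rack's average draw as rated power times average utilization; the 7.5 kW / 40% enterprise profile and the 100 kW / 90% AI training profile are hypothetical assumptions chosen for illustration, not measured values.

```python
HOURS_PER_YEAR = 8_760


def annual_energy_mwh(rated_kw: float, avg_utilization: float) -> float:
    """Annual energy for one rack, approximating draw as rated power x average utilization."""
    return rated_kw * avg_utilization * HOURS_PER_YEAR / 1_000


# Hypothetical profiles; the utilization values are illustrative assumptions.
enterprise = annual_energy_mwh(rated_kw=7.5, avg_utilization=0.4)
ai_training = annual_energy_mwh(rated_kw=100, avg_utilization=0.9)

print(f"Enterprise rack:  ~{enterprise:,.1f} MWh/yr")
print(f"AI training rack: ~{ai_training:,.1f} MWh/yr")
print(f"Ratio: ~{ai_training / enterprise:.0f}x")
```

Under these assumptions, a single AI training rack consumes about as much energy in a year as thirty enterprise racks.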

The result is a clear mismatch.

Data centers were designed for steady, predictable scaling, but generative AI introduces extreme density, continuous load, and dynamic demand patterns that existing architectures struggle to accommodate.

What Needs to Change Inside the Data Center to Support Generative AI?

Supporting generative AI is not about incremental upgrades; it requires fundamental architectural changes across compute, power, cooling, and interconnects.

The first shift is in compute design. AI workloads rely on tightly coupled GPU clusters, where performance depends on high-bandwidth interconnects and parallel processing rather than distributed CPU systems. According to McKinsey & Company, AI-driven demand is pushing data centers toward architectures optimized for accelerated computing and high-performance networking.
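Why interconnects matter can be shown with the standard bandwidth model for ring all-reduce, a common way to synchronize gradients across GPUs: each GPU transfers about 2(N-1)/N times the payload per synchronization. The sketch assumes a hypothetical 10 GB payload (roughly 5 billion parameters in 16-bit precision), 8 GPUs, and illustrative link speeds; it ignores latency and any overlap of communication with compute.

```python
def ring_allreduce_seconds(payload_gb: float, num_gpus: int, link_gb_per_s: float) -> float:
    """Bandwidth-only estimate of a ring all-reduce.

    Each GPU sends (and receives) 2 * (N - 1) / N times the payload, so the
    transfer time is that volume divided by per-GPU link bandwidth. Latency and
    compute/communication overlap are ignored.
    """
    if num_gpus < 2:
        return 0.0  # nothing to synchronize with a single GPU
    volume_gb = 2 * (num_gpus - 1) / num_gpus * payload_gb
    return volume_gb / link_gb_per_s


PAYLOAD_GB = 10.0  # hypothetical: ~5B parameters of 16-bit gradients
NUM_GPUS = 8

for bw in (12.5, 50.0, 400.0):  # hypothetical per-GPU bandwidths in GB/s
    t = ring_allreduce_seconds(PAYLOAD_GB, NUM_GPUS, bw)
    print(f"{bw:>6.1f} GB/s -> {t * 1000:7.1f} ms per synchronization")
```

Because this cost recurs at every synchronization step, a slow link can leave expensive GPUs idle while they wait on data, which is why bandwidth is treated as a first-order design parameter in AI clusters.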

Power density is the most immediate constraint. Traditional racks operated at 5-10 kW, but AI deployments are pushing far beyond that. Uptime Institute highlights that rising rack densities are forcing major changes in facility and electrical design.
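One reason electrical design must change is current. For a balanced three-phase load, line current is I = P / (√3 × V_LL × PF). The sketch below assumes 415 V line-to-line distribution and a 0.95 power factor, both hypothetical choices; real facilities vary by region and design.

```python
import math


def three_phase_current_a(power_kw: float, line_voltage_v: float, power_factor: float) -> float:
    """Line current for a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return power_kw * 1_000 / (math.sqrt(3) * line_voltage_v * power_factor)


LINE_VOLTAGE_V = 415   # assumption: 415 V line-to-line distribution
POWER_FACTOR = 0.95    # assumption

for rack_kw in (10, 30, 100):
    amps = three_phase_current_a(rack_kw, LINE_VOLTAGE_V, POWER_FACTOR)
    print(f"{rack_kw:>4} kW rack -> ~{amps:,.0f} A per phase")
```

Current scales linearly with rack power, so a move from 10 kW to 100 kW racks calls for correspondingly higher-rated conductors, breakers, and busways, within whatever limits the applicable electrical codes set.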

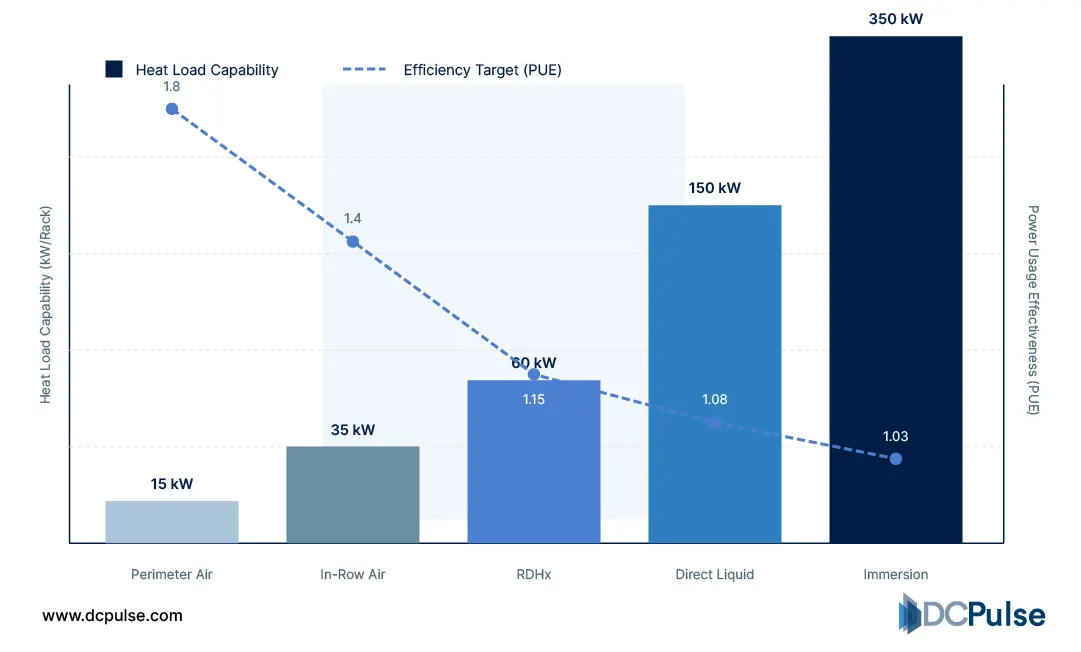

Cooling systems are also under pressure. As densities rise, air cooling approaches its limits, making liquid cooling increasingly necessary. Research from Lawrence Berkeley National Laboratory shows that advanced cooling technologies are required to manage modern data center heat loads.

Data Center Cooling Capability vs. Heat Load
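The limits of air cooling follow from a basic heat-balance relation: the coolant flow needed to remove heat Q is Q / (ρ × c_p × ΔT), where ρ is density, c_p is specific heat, and ΔT is the coolant's temperature rise. This sketch compares air and water for a single 100 kW rack, using approximate room-temperature fluid properties and assumed temperature rises (12 K for air, 10 K for water); it is a physics illustration, not a cooling design.

```python
def volumetric_flow_m3_per_s(heat_kw: float, density_kg_m3: float,
                             cp_j_per_kg_k: float, delta_t_k: float) -> float:
    """Coolant flow needed to carry away heat: Q = rho * flow * c_p * dT."""
    return heat_kw * 1_000 / (density_kg_m3 * cp_j_per_kg_k * delta_t_k)


# Approximate fluid properties near room temperature.
AIR = {"density_kg_m3": 1.2, "cp_j_per_kg_k": 1_005}
WATER = {"density_kg_m3": 997, "cp_j_per_kg_k": 4_186}

RACK_KW = 100        # upper end of the AI rack range cited in the text
AIR_DELTA_T = 12     # assumption: 12 K inlet-to-outlet rise for air
WATER_DELTA_T = 10   # assumption: 10 K supply-to-return rise for water

air = volumetric_flow_m3_per_s(RACK_KW, delta_t_k=AIR_DELTA_T, **AIR)
water = volumetric_flow_m3_per_s(RACK_KW, delta_t_k=WATER_DELTA_T, **WATER)

print(f"Air:   ~{air:.1f} m^3/s (~{air * 2118.88:,.0f} CFM)")
print(f"Water: ~{water * 1000:.1f} L/s")
```

Removing 100 kW takes on the order of 7 m³ of air per second, versus about 2.4 liters of water, a volume difference of nearly three thousand times. That gap is why high-density racks push operators toward liquid cooling.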

AI workloads also introduce high and variable power demand, complicating infrastructure planning. A study on arXiv shows that AI systems generate more dynamic power profiles than traditional workloads.
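Dynamic power profiles are usually characterized by metrics such as peak-to-average ratio and ramp rate. The sketch below computes both from a sampled trace; the trace itself is hypothetical, loosely mimicking a synchronous training job whose power dips while GPUs wait on communication, and it is not data from the cited study.

```python
from statistics import mean


def power_profile_stats(samples_kw: list[float], interval_s: float) -> dict[str, float]:
    """Summarize a sampled power trace: average, peak, peak-to-average, worst ramp."""
    if len(samples_kw) < 2:
        raise ValueError("need at least two samples")
    avg = mean(samples_kw)
    peak = max(samples_kw)
    # Largest change between consecutive samples, normalized to kW per second.
    max_ramp = max(abs(b - a) for a, b in zip(samples_kw, samples_kw[1:])) / interval_s
    return {
        "average_kw": avg,
        "peak_kw": peak,
        "peak_to_average": peak / avg,
        "max_ramp_kw_per_s": max_ramp,
    }


# Hypothetical 1-second samples from one rack running a synchronous training job:
# compute phases near full power, brief dips while GPUs wait on communication.
trace_kw = [95, 96, 95, 60, 94, 96, 95, 58, 95, 96]

for name, value in power_profile_stats(trace_kw, interval_s=1.0).items():
    print(f"{name:>18}: {value:.2f}")
```

Here the average is 88 kW with a 96 kW peak, yet the rack swings by up to 37 kW within a single second, the kind of step change that steady-state power planning does not anticipate.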

The shift is clear: data centers are being redesigned for density, throughput, and dynamic performance, not just efficiency.

Who Is Actually Rebuilding Data Centers for AI, and How?

The shift toward AI-ready infrastructure is no longer theoretical; massive, real-world investments and deployments are driving it.

Hyperscalers are at the center of this transformation. Microsoft is building purpose-built AI data centers such as its “AI factory” facilities, deploying hundreds of thousands of AI chips and investing tens of billions of dollars in integrated infrastructure across regions.

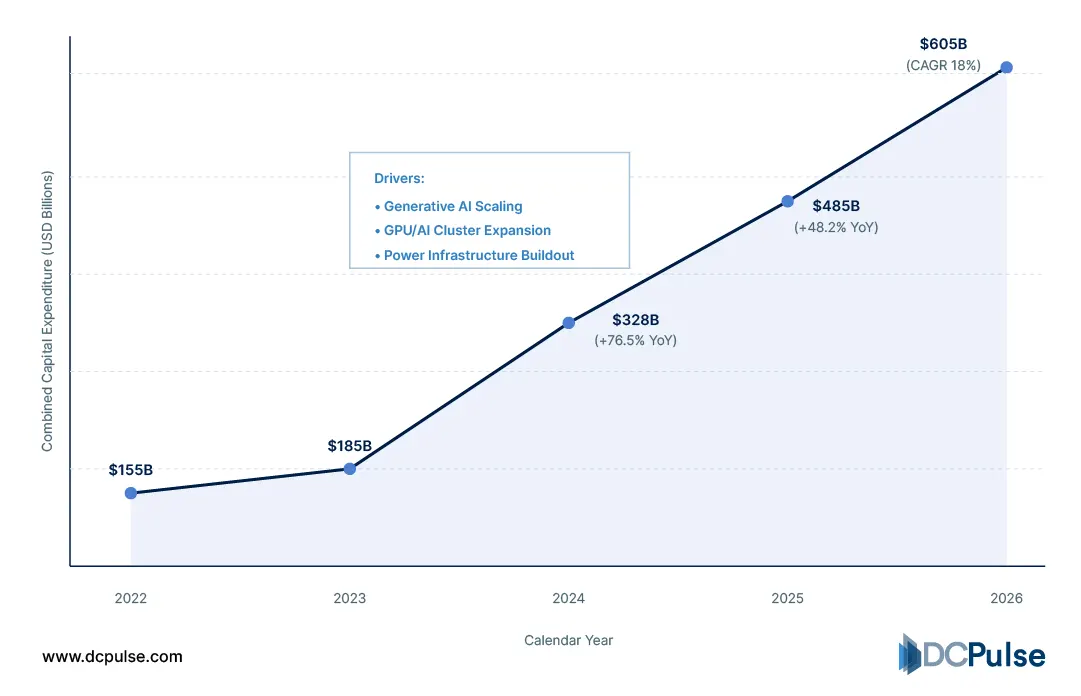

Hyperscaler Combined AI Capex Growth (2022–2026)

At an industry level, this expansion is accelerating rapidly. Hyperscalers like Microsoft, Google, and AWS are expected to account for ~67% of global data center capacity by 2031, reflecting the concentration of AI demand in infrastructure ownership.

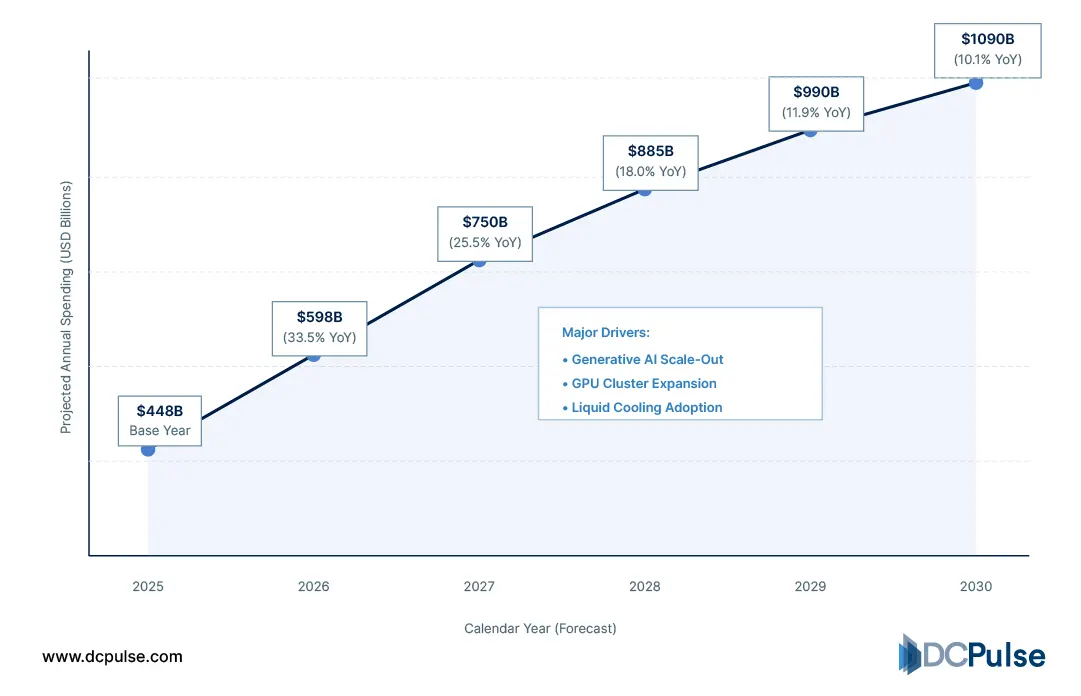

The scale of investment is unprecedented. Industry estimates suggest that USD 3-4 trillion could be spent on AI infrastructure by the end of the decade, highlighting the magnitude of this buildout.

Projected Global AI Infrastructure Spending (2025-2030)

Partnership models are also evolving. Companies are increasingly relying on specialized infrastructure providers and joint ventures to scale AI capacity faster. For example, large-scale agreements between hyperscalers and infrastructure firms are enabling multi-gigawatt AI deployments without fully owning all assets.

What stands out is the shift from experimentation to execution.

AI is no longer just influencing data centers; it is driving coordinated, capital-intensive infrastructure transformation at a global scale.

Is Generative AI Forcing a Complete Architectural Reset?

Generative AI is not simply scaling existing data center models; it is exposing their limits.

The rapid rise in compute density, power demand, and cooling requirements suggests that traditional architectures are no longer sufficient on their own. AI workloads are forcing operators to rethink how infrastructure is designed, shifting focus toward performance, density, and energy alignment rather than incremental efficiency gains.

At the same time, this is not a complete replacement. Traditional data centers still provide advantages in large-scale, stable environments where predictability and cost optimization matter. What is emerging instead is a hybrid architecture, where AI-optimized clusters coexist with conventional infrastructure, each serving different workload types.

This shift introduces new constraints. Power availability is becoming a primary bottleneck, cooling technologies must evolve rapidly, and interconnect performance is now critical to overall system efficiency. These factors will shape how future facilities are designed and where they can be deployed.
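Power availability becomes a bottleneck because every watt of IT load brings cooling and distribution overhead, commonly summarized as power usage effectiveness (PUE = total facility power / IT power). This sketch assumes a PUE of 1.3 along with hypothetical cluster and grid-connection sizes.

```python
def facility_power_mw(num_racks: int, kw_per_rack: float, pue: float) -> float:
    """Grid power needed: IT load scaled by power usage effectiveness (PUE)."""
    it_mw = num_racks * kw_per_rack / 1_000
    return it_mw * pue


def max_racks(grid_mw: float, kw_per_rack: float, pue: float) -> int:
    """Whole racks a fixed grid connection can support at a given density and PUE."""
    return int(grid_mw * 1_000 / (kw_per_rack * pue))


PUE = 1.3  # assumption; real facilities vary

print(f"1,000 racks x 100 kW: ~{facility_power_mw(1_000, 100, PUE):.0f} MW from the grid")
print(f"50 MW connection at 100 kW/rack: {max_racks(50, 100, PUE)} racks")
```

Under these assumptions, a 1,000-rack AI cluster at 100 kW per rack needs about 130 MW from the grid, while a 50 MW connection supports only 384 such racks.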

The takeaway is clear:

Generative AI is not just changing workloads; it is redefining the design priorities of data centers, pushing the industry toward architectures that are built for intensity, not just scale.