For most of the history of data centers, growth was treated as a future problem.

You built capacity for today, left some empty floor space, and hoped tomorrow would fit inside the building.

Hyperscalers changed the premise.

They don’t simply expand facilities. They design facilities meant to keep changing.

In a hyperscale campus, the first deployment is not the finished data center; it is the starting configuration of a system that will be rebuilt many times without ever stopping. Power blocks are added before demand arrives. Cooling loops are sized for equipment generations that do not yet exist. Entire halls are planned around replacement cycles instead of occupancy.

The building is no longer the unit of infrastructure.

The lifecycle is.

What looks oversized on day one becomes normal by year three and insufficient by year six, and the design already knows that. Scalability is no longer about making room. It is about making change routine.

Modern facilities are not growing bigger.

They are learning how to stay unfinished on purpose.

Why Traditional Data Centers Struggle to Scale for AI

For decades, most data centers were designed around predictable growth. You estimated future demand, built electrical and cooling capacity around that forecast, and expanded only when utilization approached a limit. That model worked when compute loads changed slowly.

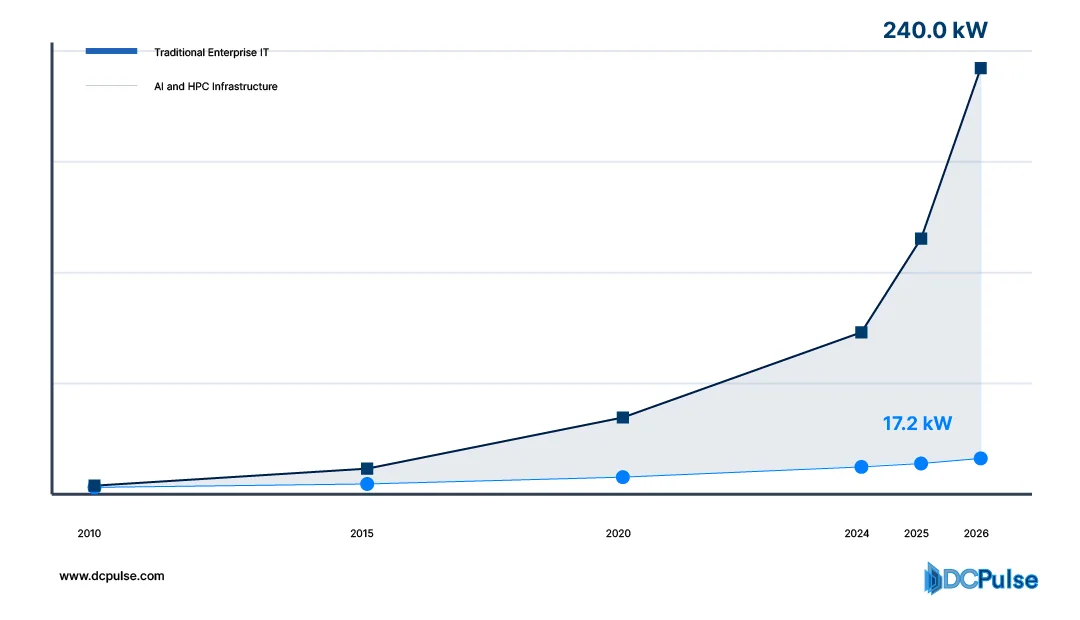

AI workloads changed the pace.

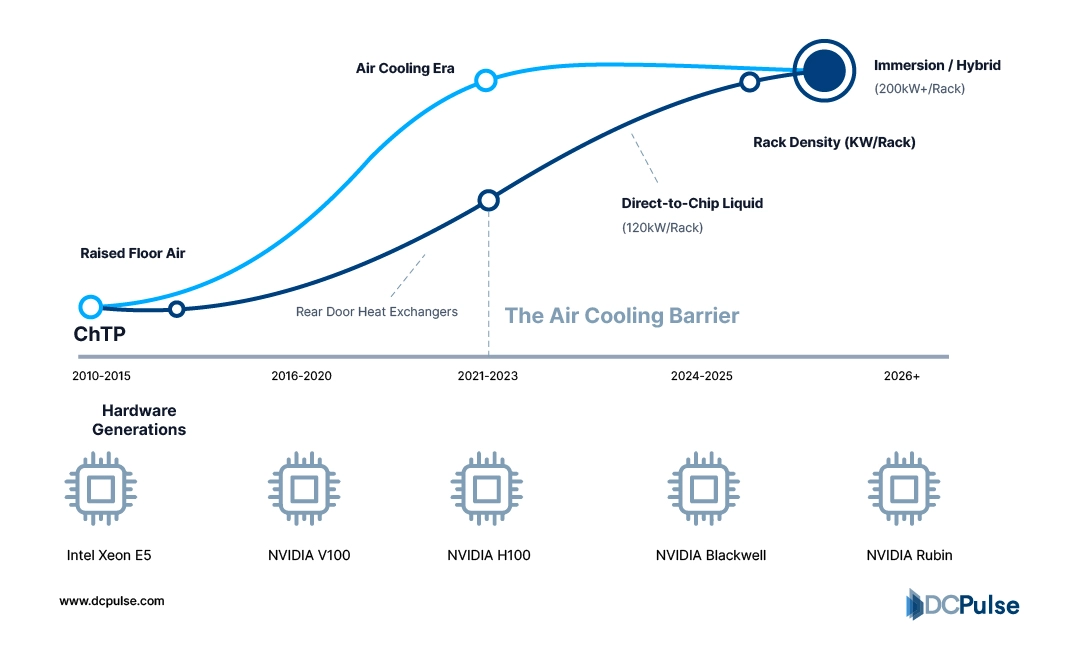

Modern accelerator systems dramatically increased rack density. NVIDIA explains that AI training infrastructure now operates at power levels far above traditional enterprise environments and requires new approaches to power delivery and cooling. The Uptime Institute likewise reports operators moving beyond legacy density ranges as AI and high-performance computing deployments expand.

Rack Density Progression (2010-2026)

The consequence is not just higher heat. It is architectural rigidity. Traditional facilities embed assumptions into the building: airflow is designed for uniform racks, electrical distribution is sized for steady growth, and cooling systems are optimized for one equipment generation. When density shifts unevenly, as AI clusters consistently do, operators must retrofit live halls with containment systems, rear-door heat exchangers, or liquid cooling overlays.

Many operators are introducing liquid cooling into existing air-cooled environments because new equipment exceeds their legacy design envelopes.

This creates a scaling paradox. Capacity technically exists, but it cannot be used without modification. Hyperscale environments avoid this by planning for replacement rather than occupancy. Instead of filling a room once, they stage infrastructure in power and cooling blocks so new hardware generations can be deployed without rebuilding the facility. Microsoft describes this modular capacity approach as enabling the continuous addition of infrastructure without disrupting live services.
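The paradox can be made concrete with a minimal sketch. It assumes, for illustration only, that a hall has one aggregate power budget and one per-rack air-cooling limit; the function name `assess_hall` and every number in the example are hypothetical, not figures from any operator. The point it shows: a hall can sit comfortably inside its total power budget while individual high-density racks still fall outside what the cooling design can handle.

```python
def assess_hall(rack_kw, hall_budget_kw, air_limit_kw_per_rack):
    """Check a hall against two hypothetical limits.

    rack_kw: power draw of each rack in kW (illustrative values).
    hall_budget_kw: total electrical capacity of the hall.
    air_limit_kw_per_rack: assumed maximum a single air-cooled rack position can reject.
    """
    total_kw = sum(rack_kw)
    # Racks whose density exceeds the air-cooling envelope need a retrofit
    # (containment, rear-door heat exchangers, or liquid cooling).
    over_envelope = [i for i, kw in enumerate(rack_kw) if kw > air_limit_kw_per_rack]
    return {
        "total_kw": total_kw,
        "within_power_budget": total_kw <= hall_budget_kw,
        "racks_needing_retrofit": over_envelope,
    }


# Hypothetical hall: twenty conventional racks plus two AI racks.
racks = [8.0] * 20 + [80.0, 80.0]
report = assess_hall(racks, hall_budget_kw=400.0, air_limit_kw_per_rack=20.0)
print(report)
```

In this toy case the hall is under its power budget, yet two racks still require cooling changes: capacity exists on paper but cannot be used as built.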

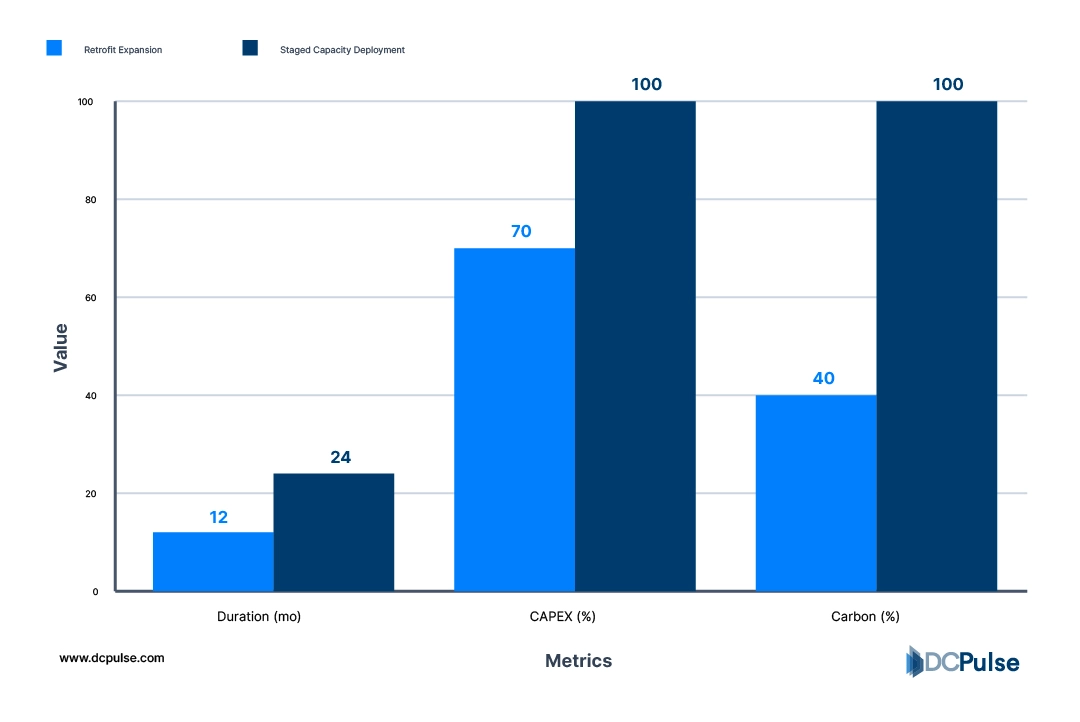

Retrofit Expansion vs Staged Capacity Deployment

The difference defines today’s landscape. Traditional facilities scale until redesign becomes necessary, while hyperscale facilities are designed so redesign never interrupts operation. Scalability is no longer about reserving empty space. It is about designing buildings that expect change.

Infrastructure Designed to Be Rebuilt

Hyperscale facilities do not treat upgrades as events. They treat them as routine.

The key change is that infrastructure layers are separated so each can evolve independently. The building shell lasts decades, the electrical backbone lasts many years, and the compute layer is expected to rotate frequently. Instead of protecting the data center from change, the design organizes change.
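One way to picture layers that evolve independently is a toy refresh schedule. The sketch below is hypothetical: the `Layer` type, `refresh_schedule` function, and the lifespans of 30, 15, and 4 years are placeholders standing in for "decades", "many years", and "frequently", not published figures. It simply lists the years in which each layer would be replaced over a planning horizon.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    lifespan_years: int  # hypothetical planning lifetime, not a measured figure


def refresh_schedule(layers, horizon_years):
    """Map each layer to the years (after the initial build at year 0)
    in which it would be replaced within the planning horizon."""
    return {
        layer.name: list(range(layer.lifespan_years, horizon_years + 1, layer.lifespan_years))
        for layer in layers
    }


# Illustrative lifespans only.
layers = [
    Layer("building shell", 30),
    Layer("electrical backbone", 15),
    Layer("compute", 4),
]
for name, years in refresh_schedule(layers, horizon_years=20).items():
    print(f"{name}: replaced in years {years}")
```

Over a 20-year horizon the shell is never replaced, the electrical backbone turns over once, and compute rotates five times. Keeping those cycles decoupled is what lets the fast layer change without touching the slow ones.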

Power is the clearest example. Rather than installing electrical capacity only when racks arrive, hyperscale operators deploy capacity in staged blocks that exceed the initial IT load. Google describes its campus power architecture as modular units that can be expanded without interrupting systems already in operation. This allows new clusters to come online without rewiring live rooms.
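Staged deployment can be sketched as a simple rule: add fixed-size power blocks whenever installed capacity would fall short of the demand expected a set number of years ahead. This is a minimal illustration, assuming a known demand forecast, a uniform block size, and a one-year lead time; the function `staged_blocks` and all values are hypothetical.

```python
import math


def staged_blocks(demand_mw, block_mw, lead_years=1):
    """Return installed capacity per year when power is added in fixed blocks
    ahead of demand. demand_mw[i] is the (hypothetical) IT load in year i."""
    installed = []
    capacity = 0
    last = len(demand_mw) - 1
    for year in range(len(demand_mw)):
        # Provision for the load expected lead_years from now, not today's load.
        target = demand_mw[min(year + lead_years, last)]
        if capacity < target:
            blocks_needed = math.ceil((target - capacity) / block_mw)
            capacity += blocks_needed * block_mw
        installed.append(capacity)
    return installed


# Hypothetical five-year demand curve (MW) and 12 MW power blocks.
demand = [4, 10, 18, 30, 45]
capacity = staged_blocks(demand, block_mw=12)
for year, (load, cap) in enumerate(zip(demand, capacity)):
    print(f"year {year}: load {load} MW, installed {cap} MW, headroom {cap - load} MW")
```

Under these assumptions the first year looks heavily oversized (12 MW installed for a 4 MW load), but capacity is always in place before the load that needs it arrives, and each addition is a discrete block rather than a rebuild of existing rooms.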

Cooling follows the same philosophy. Instead of a single airflow strategy, distribution is built as a transport system capable of supporting multiple thermal methods across hardware generations. Meta explains that its data centers are engineered so new cooling approaches can be introduced as hardware changes, rather than the facility being rebuilt each time.

Hardware Generations vs. Cooling Transitions

This shifts the meaning of capacity. A traditional facility reaches full capacity when space and power are consumed. A hyperscale facility reaches full capacity only when replacement stops, which ideally never happens. Infrastructure is planned around turnover cycles, not occupancy.

Microsoft notes that its modular deployment strategy allows incremental expansion aligned to demand instead of periodic large construction phases.

Scalability, therefore, becomes operational behavior. The facility is not expanded occasionally; it is continuously renewed.

How Operators Are Changing the Way They Build

The shift toward scalable design is no longer limited to hyperscalers. It is now influencing how colocation providers and enterprise operators plan capacity, contracts, and construction timelines.

Instead of building a single large facility and filling it over years, operators increasingly construct campuses in repeatable phases. AWS explains its data center regions are developed as clusters of multiple buildings added over time rather than a single finished structure, allowing infrastructure to grow alongside demand. The facility becomes a platform for deployment rather than a completed project.

This approach changes procurement behavior. Hardware is no longer purchased only when space exists; buildings are prepared so equipment generations can be swapped continuously. Supply chains are aligned to refresh cycles rather than construction milestones. Google has described aligning server deployment schedules to ongoing operational turnover rather than periodic replacement waves.

Colocation providers are adopting similar models to support AI tenants whose demand arrives in large increments. Instead of allocating fixed suites, providers increasingly deliver expandable power blocks that customers grow into over time. Industry analysis from the Uptime Institute notes operators are moving toward flexible capacity delivery because AI workloads appear in sudden, high-density clusters rather than gradual adoption.

What emerges is a different construction philosophy. The building is no longer delivered once and operated afterwards. Construction, deployment, and refresh become overlapping processes.

Data centers are starting to operate less like real estate and more like infrastructure supply chains: continuously extended, continuously replaced, and rarely finished.

Designing for Change, Not Capacity

Scalability used to mean preparing for growth. Hyperscale design reframes it as preparing for change.

Once facilities are built around replacement cycles instead of occupancy, individual servers stop defining the infrastructure. Hardware becomes temporary, while power paths, cooling distribution, and deployment processes become the permanent system. The data center behaves less like a building that hosts equipment and more like an operating environment that continuously regenerates it.

This shift carries a strategic implication. Capacity planning moves away from forecasting demand and toward designing tolerance for uncertainty. Operators no longer need to predict the exact hardware generation or density five years ahead; they need the ability to absorb whatever arrives.

That is why modern large-scale facilities rarely appear “complete.” Their efficiency comes from remaining adaptable rather than being finished. Expansion, refresh, and construction blur into a single operational rhythm.

The server does not literally disappear. But its importance as the planning unit does. What matters instead is whether the facility can replace everything inside it without ever pausing service.

Scalability, in the hyperscale era, is not the ability to grow bigger.

It is the ability to keep changing indefinitely.