For years, 10-15 kW per rack defined the upper limit of data center density. Even as workloads scaled, most facilities pushed upward cautiously, reaching 20 kW in advanced deployments and 30 kW in highly specialized environments. That steady progression has now been disrupted. Driven by AI and accelerated computing, 100 kW per rack is rapidly emerging not as an outlier but as a new baseline for next-generation infrastructure.

This is not a gradual evolution; it’s a structural shift. Modern AI clusters, densely packed with GPUs and custom silicon, are concentrating massive compute power into increasingly compact footprints. What once required entire rows can now fit within a single rack, bringing with it unprecedented thermal and power challenges.

The implications are immediate. Traditional air cooling is reaching its physical limits, power delivery architectures are being reworked, and operators are being forced to rethink how racks are designed and deployed. Hyperscalers are already moving ahead, while colocation providers race to adapt.

The question is no longer whether 100 kW racks will define the future.

It’s how quickly the rest of the data center ecosystem can keep up.

Where Does Rack Density Stand Today?

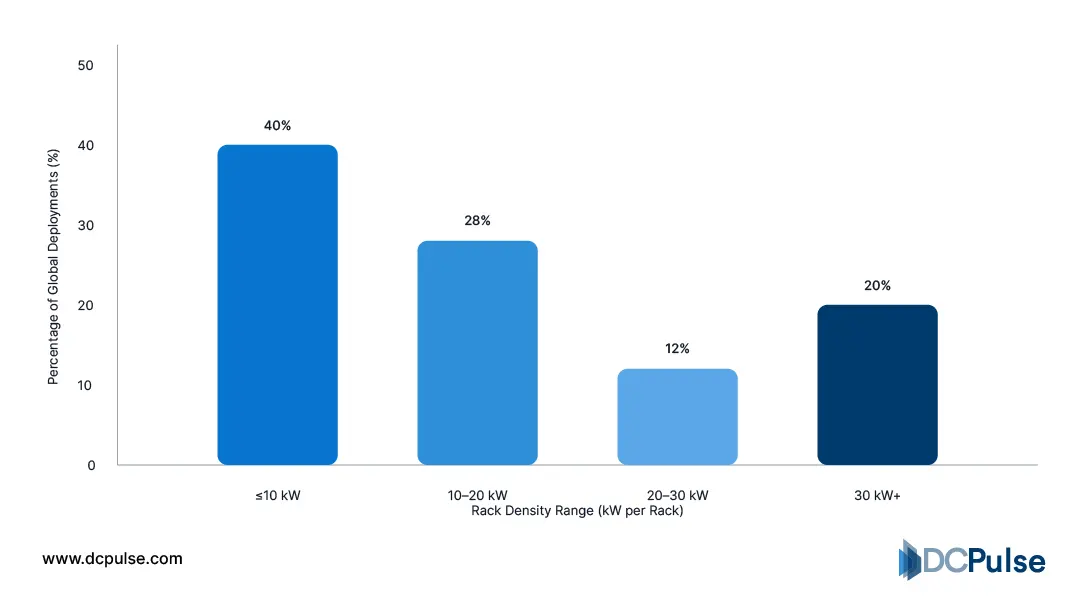

Despite the growing buzz around 100kW racks, today’s data center reality is far more grounded and uneven. Most facilities globally are still operating at relatively modest densities, a reflection of infrastructure originally built for traditional enterprise workloads rather than AI-scale compute.

According to research from Uptime Institute, rack densification is happening, but not at the pace many assume. A significant portion of data centers continues to run well below high-density thresholds, with upgrades occurring gradually rather than through large-scale transformation.

Global Rack Density Distribution (2025/2026)

Data from SDxCentral provides a clearer breakdown of this spread. While densities have increased over time, the majority of deployments still fall below 30kW. Only a small fraction of racks operate at 40kW or higher, and systems exceeding 50kW are rarer still.

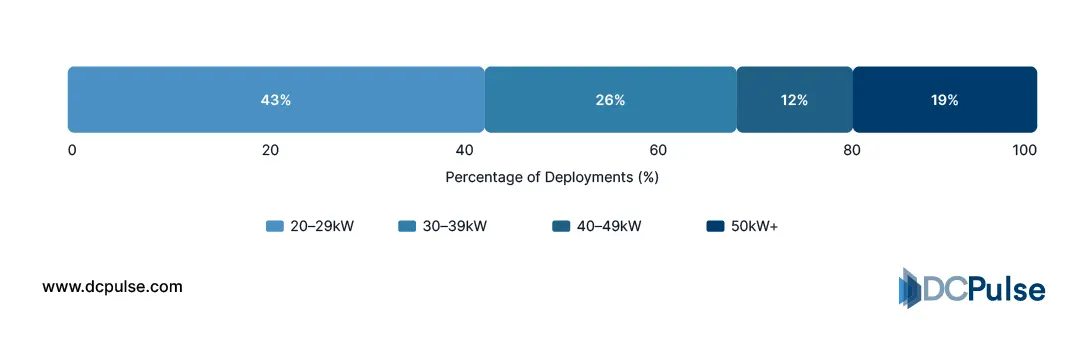

Global High-Density Rack Distribution by Tier (20kW+, 2026)

At the same time, a distinct shift is underway at the top end. As noted by Data Center Frontier, AI and GPU-driven workloads are pushing newer deployments into the 20-40kW range, with even higher rack densities emerging in specialized environments.

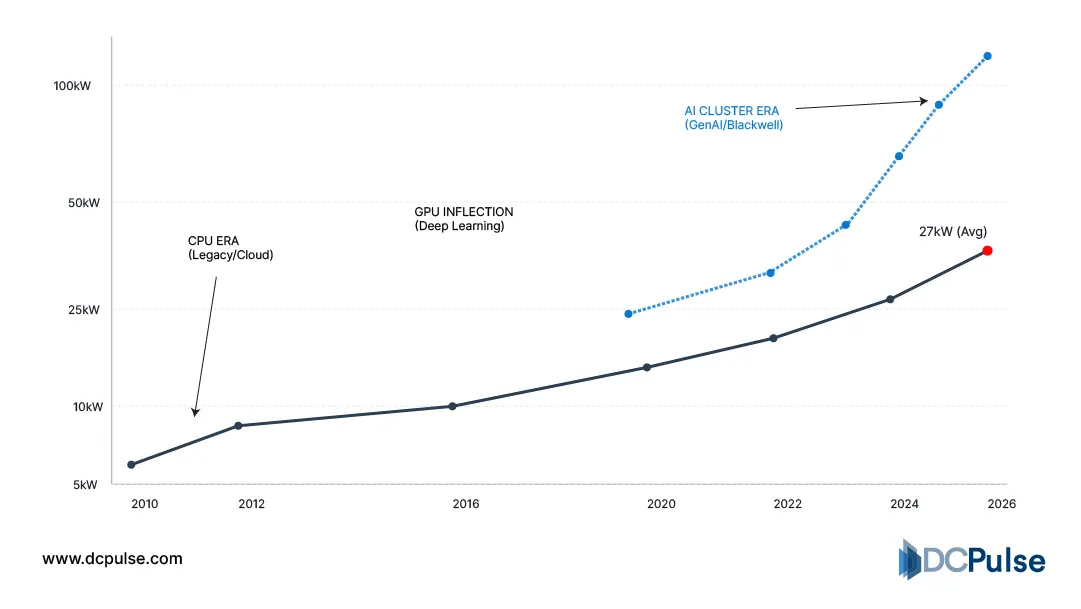

Rack Density Evolution vs. Compute Trends (2010–2026)

This creates a split landscape. On one side, legacy and enterprise facilities remain anchored in lower-density configurations, constrained by power delivery limits and air-cooling capacity. On the other, AI-focused deployments are rapidly advancing toward 50kW, 80kW, and beyond, often requiring entirely new facility designs rather than retrofits.

The result is not a single industry standard but a widening spectrum. While 100kW racks are entering production in cutting-edge environments, they sit at the far edge of a market where most infrastructure is still catching up.

What Are the Technologies Behind the 100kW Rack?

At 100kW, a rack is no longer just an enclosure; it becomes an integrated system where compute, cooling, and power are engineered together. The biggest constraint at this level is heat. Traditional air cooling, effective at lower densities, struggles to remove the thermal load generated by modern AI hardware, making it increasingly impractical.
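To make the air-cooling constraint concrete, here is a rough back-of-the-envelope sketch based on the sensible-heat relation (power = density × specific heat × airflow × temperature rise). The air properties and the 15 K inlet-to-outlet temperature rise are illustrative assumptions, not figures from the sources cited here, and real facilities will differ.

```python
# Sensible-heat estimate of the airflow needed to carry away a rack's heat load.
# Assumed values (not from the article): air density, specific heat of air,
# and a 15 K inlet-to-outlet temperature rise.

AIR_DENSITY_KG_M3 = 1.2        # approximate, near sea level at about 20 °C
AIR_CP_J_PER_KG_K = 1005.0     # specific heat of dry air
M3S_TO_CFM = 2118.88           # cubic metres per second -> cubic feet per minute


def required_airflow_m3s(rack_power_w: float, delta_t_k: float = 15.0) -> float:
    """Volumetric airflow (m^3/s) that absorbs rack_power_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return rack_power_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


# Compare a traditional rack, a dense rack, and a 100 kW rack.
for kw in (15, 30, 100):
    flow = required_airflow_m3s(kw * 1000)
    print(f"{kw:>3} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions, a 100 kW rack needs about 5.5 m³/s of air (over 11,000 CFM) through a single cabinet, which illustrates why airflow alone becomes impractical at this density.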

This is why liquid cooling is now central to high-density design. NVIDIA highlights that next-generation AI systems are being built with liquid-cooled architectures to handle extreme rack-level power loads.

Direct-to-chip cooling, where coolant flows directly to GPUs and CPUs, significantly improves heat transfer efficiency. In more advanced scenarios, immersion cooling is also being explored to manage even higher densities.
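The heat-transfer advantage of liquid can be sketched with the same energy balance used for any coolant (heat load = mass flow × specific heat × temperature rise). The example below assumes plain water and a 10 K coolant temperature rise; both are illustrative assumptions, and production loops often use water-glycol mixtures with somewhat lower heat capacity.

```python
# Coolant flow needed to carry a heat load, from Q = m_dot * c_p * delta_T.
# Assumptions (not from the article): plain water properties and a 10 K rise.

WATER_DENSITY_KG_M3 = 997.0      # near 25 °C
WATER_CP_J_PER_KG_K = 4186.0     # specific heat of liquid water


def coolant_flow_l_per_min(heat_load_w: float, delta_t_k: float = 10.0) -> float:
    """Litres per minute of water that absorb heat_load_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_load_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_M3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


# Flow needed if the liquid loop captured the full load of each rack.
for kw in (30, 50, 100):
    print(f"{kw:>3} kW: {coolant_flow_l_per_min(kw * 1000):.0f} L/min of water")
```

With these inputs, a full 100 kW load works out to roughly 144 L/min of water. In practice, direct-to-chip loops capture only part of the rack's heat, so the real figure depends on the capture fraction and the coolant chosen.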

Power delivery is evolving in parallel. At these densities, conventional rack power systems are insufficient, pushing operators toward higher-capacity distribution models such as overhead busways and integrated rack-level power systems.
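A quick calculation shows why conventional rack feeds run out of headroom. The sketch below uses the standard balanced three-phase relation, I = P / (√3 × V × PF). The 208 V and 415 V line-to-line voltages and the 0.95 power factor are assumed example values, not figures from the sources cited here.

```python
import math

# Line current for a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF).
# The voltages and power factor used below are illustrative assumptions.


def three_phase_line_current_a(
    power_w: float, line_voltage_v: float, power_factor: float = 0.95
) -> float:
    """Line current in amperes for a balanced three-phase load."""
    if line_voltage_v <= 0:
        raise ValueError("line_voltage_v must be positive")
    if not 0 < power_factor <= 1:
        raise ValueError("power_factor must be in (0, 1]")
    return power_w / (math.sqrt(3) * line_voltage_v * power_factor)


# Compare a 15 kW rack with a 100 kW rack at two common distribution voltages.
for kw in (15, 100):
    for volts in (208, 415):
        amps = three_phase_line_current_a(kw * 1000, volts)
        print(f"{kw:>3} kW at {volts} V three-phase: {amps:.0f} A per line")
```

Under these assumptions, a 100 kW rack draws roughly 146 A per line at 415 V and nearly 300 A at 208 V, several times what a 15 kW rack requires, which helps explain the move toward busways and integrated rack-level power.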

Vertiv notes that AI workloads are driving a fundamental redesign of power and thermal infrastructure within the rack.

The result is a clear shift: the 100kW rack is no longer a passive container but a tightly engineered platform built for extreme performance.

Who’s Building for It? Inside the Industry Push

The shift toward high-density racks is no longer conceptual; it is being actively deployed, led by hyperscalers building AI-first infrastructure at scale. What distinguishes this phase is not just adoption, but the speed at which cooling and power strategies are being redefined.

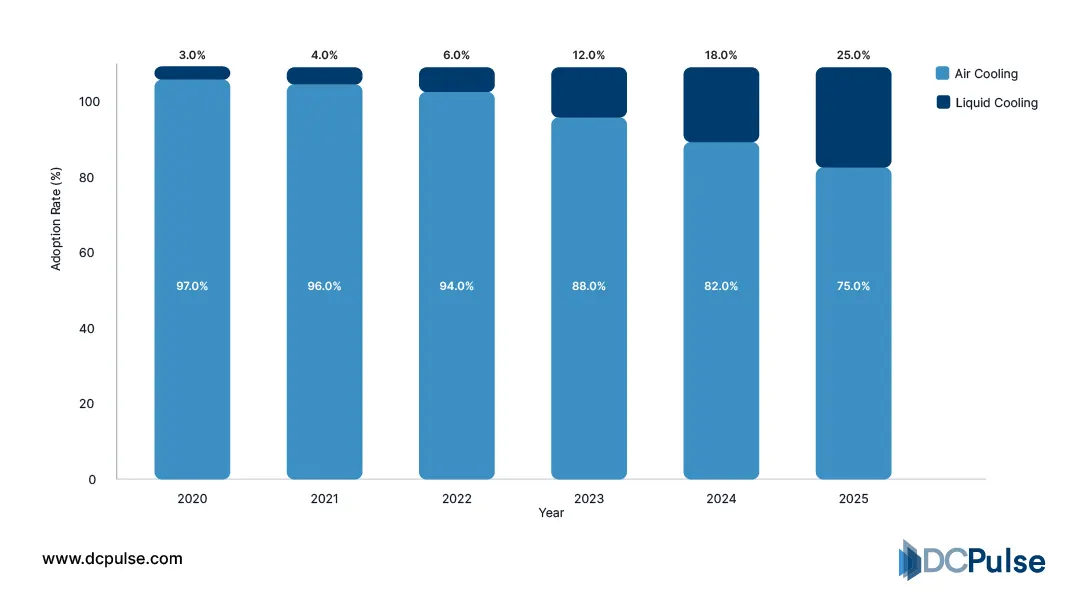

At Microsoft, recent AI data center developments are being designed around liquid cooling as a baseline rather than an upgrade. The company has acknowledged that traditional air cooling struggles to support the thermal intensity of modern AI hardware, prompting a shift toward integrated liquid-cooled environments.

Hyperscale Data Center Cooling Adoption (Air vs. Liquid, 2020-2025)

A similar transition is visible at Google, where AI workloads have pushed infrastructure beyond conventional thermal limits. The company has progressively moved toward liquid cooling in high-performance environments, particularly as accelerator-based systems scale.

Across the broader industry, this shift is accelerating. High-density deployments are increasingly tied to GPU- and accelerator-heavy workloads, pushing rack densities upward and forcing a redesign of supporting infrastructure.

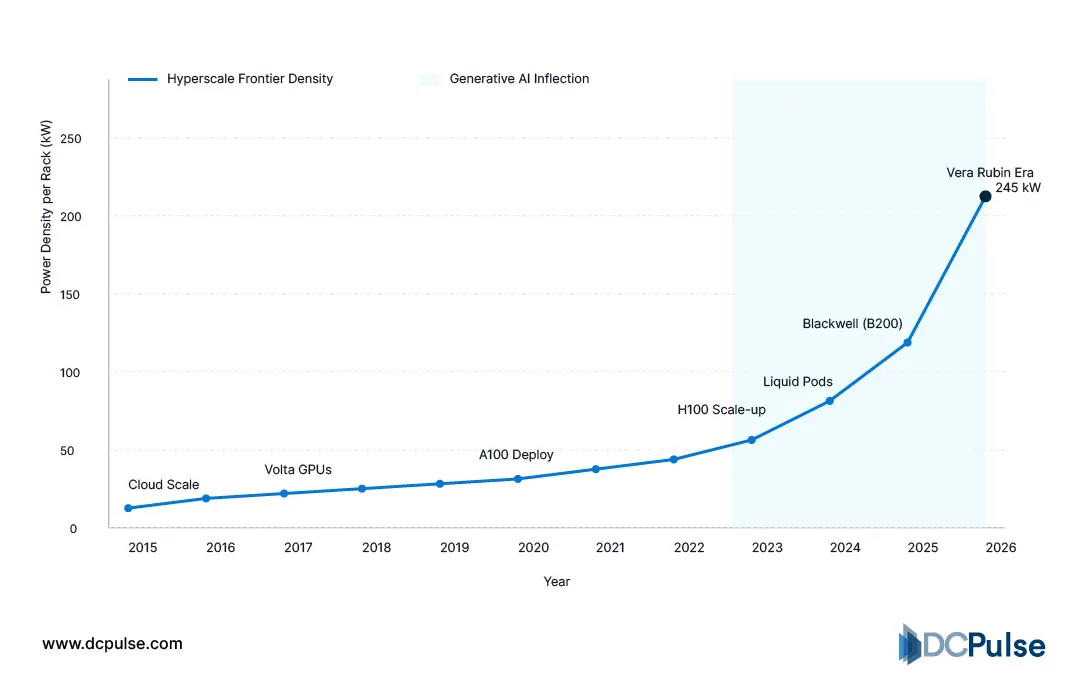

Hyperscale Rack Density Growth (2015–2026)

At the same time, not all operators are building from scratch. Many facilities are adapting incrementally, integrating liquid cooling into existing air-cooled environments to support higher-density racks without full redesigns.

At Microsoft, retrofit strategies include the use of liquid-to-air heat exchange systems to extend the life of existing infrastructure while accommodating AI workloads.

What emerges is a multi-speed industry. Hyperscalers are building AI-ready facilities from the ground up, while others are adapting in phases. Together, these approaches are accelerating the transition toward high-density racks, turning what was once an edge case into a defining infrastructure trend.

What Happens After 100kW?

If 100kW once seemed like a ceiling, it is now quickly becoming a stepping stone. The trajectory of AI infrastructure suggests that rack densities will continue to climb, with early signals already pointing toward 200kW and beyond in specialized deployments.

This next phase will not be defined by compute alone but by infrastructure limits. Power availability is emerging as a primary constraint, as delivering consistent, high-capacity energy at scale becomes increasingly complex. At the same time, liquid cooling, while effective, introduces new challenges around water usage, heat reuse, and operational complexity.

The industry also faces a structural decision. Hyperscalers are moving toward tightly integrated, custom-built systems optimized for extreme density, while colocation and enterprise providers must balance flexibility with performance. This creates tension between standardization and specialization, shaping how future facilities will be designed.

Ultimately, the shift beyond 100kW is less about reaching a number and more about redefining the data center itself. Facilities are evolving into highly engineered environments where power, cooling, and compute are inseparable and where scaling further will depend as much on infrastructure innovation as on silicon advancements.