AI is pushing data centers into a thermal crisis, and cooling is no longer just an operational concern; it has become the defining constraint of infrastructure design.

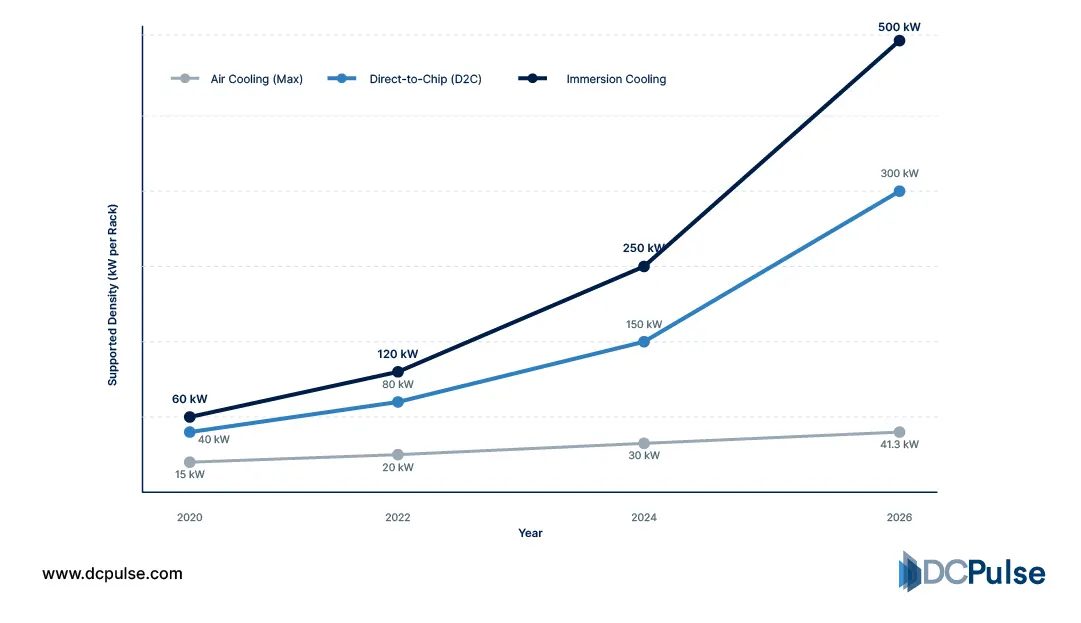

Rack densities that once hovered around 10–20 kW are now surging past 100 kW in AI environments, driven by GPU-heavy workloads and accelerated computing. At these levels, traditional air cooling simply cannot keep up, forcing the industry toward liquid-based solutions.
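A sensible-heat balance shows why air runs out of headroom. The heat a coolant stream carries is Q = ρ · V̇ · c_p · ΔT, so the volumetric flow needed to remove a given load is V̇ = Q / (ρ · c_p · ΔT). The Python sketch below is a back-of-the-envelope illustration, not a design tool. The fluid properties are approximate room-temperature textbook values, and the 10 K temperature rise is an assumed figure.

```python
# Back-of-the-envelope sensible-heat balance: Q = rho * V_dot * c_p * dT.
# Fluid properties are approximate textbook values near room temperature (assumptions).
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg*K)
WATER_DENSITY = 997.0    # kg/m^3
WATER_CP = 4180.0        # J/(kg*K)


def required_flow_m3_per_s(heat_w: float, density: float, cp: float, delta_t_k: float) -> float:
    """Volumetric flow that carries heat_w watts with a delta_t_k kelvin temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_w / (density * cp * delta_t_k)


def compare(rack_kw: float, delta_t_k: float = 10.0) -> dict:
    """Air vs. water flow needed to remove rack_kw of heat at the same temperature rise."""
    heat_w = rack_kw * 1000.0
    air = required_flow_m3_per_s(heat_w, AIR_DENSITY, AIR_CP, delta_t_k)
    water = required_flow_m3_per_s(heat_w, WATER_DENSITY, WATER_CP, delta_t_k)
    return {
        "air_m3_per_s": air,
        "water_l_per_min": water * 1000.0 * 60.0,  # m^3/s -> L/min
        "air_to_water_volume_ratio": air / water,
    }


if __name__ == "__main__":
    for kw in (15, 100):  # a legacy-density rack vs. an AI-density rack
        r = compare(kw)
        print(
            f"{kw:>4} kW rack: air {r['air_m3_per_s']:.2f} m^3/s, "
            f"water {r['water_l_per_min']:.1f} L/min, "
            f"ratio ~{r['air_to_water_volume_ratio']:.0f}x"
        )
```

Under these assumptions, a 100 kW rack needs roughly 8 m³ of air per second, compared with about 144 L of water per minute. The roughly 3,500-fold difference in volume comes entirely from water's higher density and specific heat, so it does not depend on rack power.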

Two technologies have emerged at the center of this shift: immersion cooling and direct-to-chip cooling. Both rely on a liquid's superior ability to remove heat, but they approach the problem in fundamentally different ways. Immersion cooling submerges entire servers in dielectric fluid, enabling uniform heat dissipation across all components. In contrast, direct-to-chip cooling targets only the hottest elements, like CPUs and GPUs, using cold plates to extract heat at the source.

This divergence is more than technical; it reflects two competing philosophies of data center design. Direct-to-chip favors precision and incremental evolution, while immersion pushes for a complete system-level transformation.

As AI infrastructure scales, the question is no longer whether liquid cooling will dominate, but which approach will define the future.

Where Do Immersion and Direct-to-Chip Stand Today?

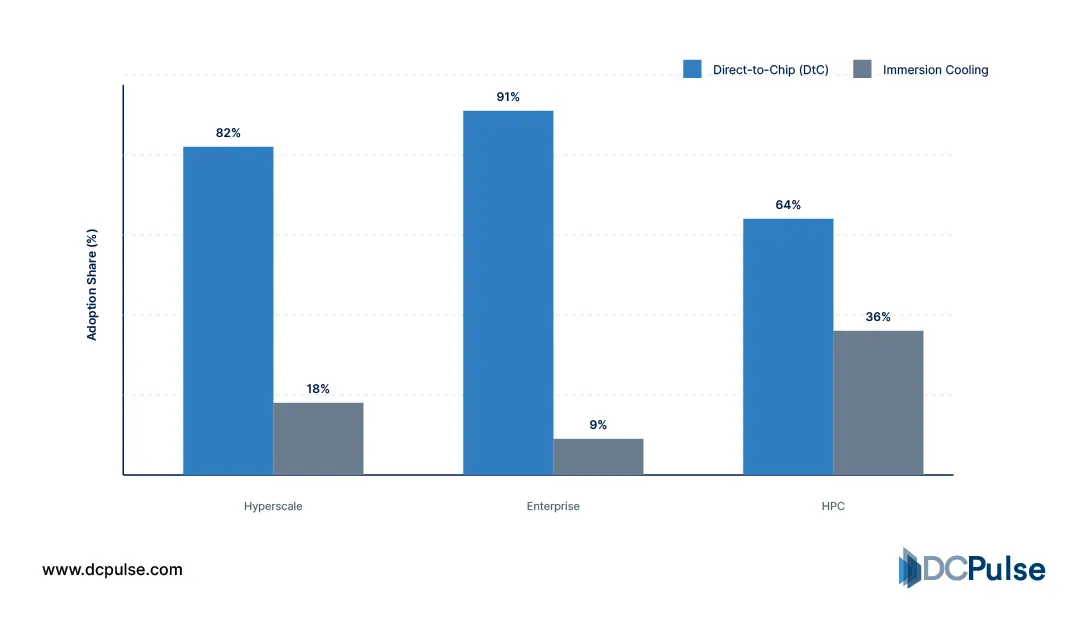

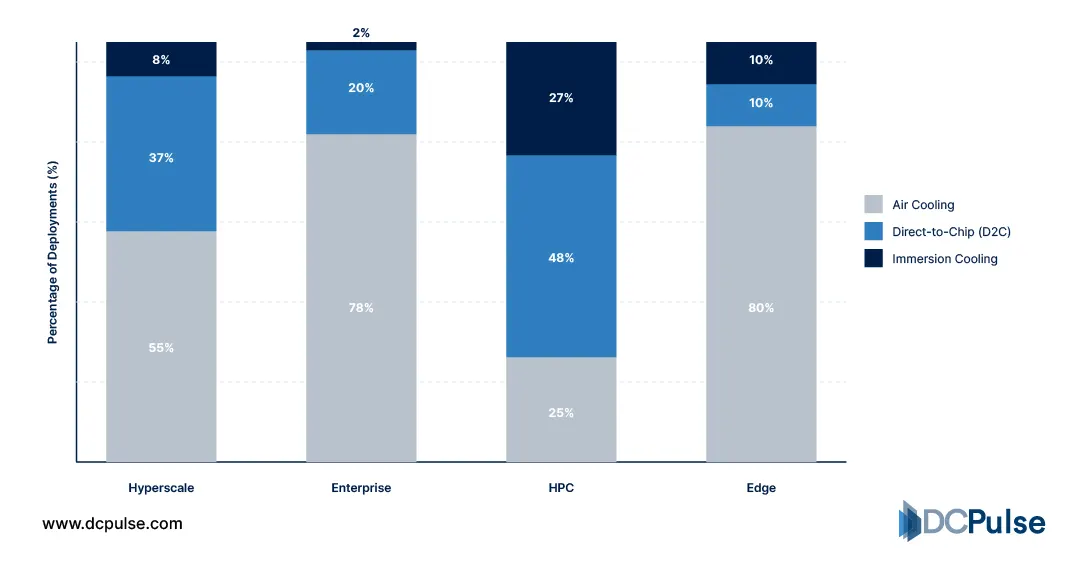

Liquid cooling is no longer experimental; it is actively being deployed as AI workloads push rack densities beyond the limits of air. However, the adoption of immersion cooling and direct-to-chip (D2C) is far from equal, reflecting differences in maturity, deployment complexity, and use cases.

Direct-to-chip cooling currently leads in real-world adoption, particularly in hyperscale and enterprise environments. By targeting high-heat components like CPUs and GPUs while leaving the rest of the system air-cooled, D2C allows operators to integrate liquid cooling into existing infrastructure without a complete redesign. This incremental approach makes it the preferred choice for retrofits and large-scale AI deployments. TechTarget highlights that liquid cooling methods such as D2C are increasingly adopted to handle higher rack densities while maintaining compatibility with traditional data center designs.

Adoption Split: Direct-to-Chip vs. Immersion Cooling (2025–2026)

Immersion cooling, while gaining attention, remains more niche. It is primarily deployed in high-performance computing (HPC), edge environments, and specialized AI clusters where maximum thermal efficiency is required. Its ability to cool entire systems uniformly makes it highly effective at extreme densities, but it often requires purpose-built infrastructure and operational changes. Research published on arXiv shows that immersion cooling enables significantly higher density and improved energy efficiency compared to traditional approaches.

Supported Rack Density: Air vs. D2C vs. Immersion (kW per rack)

The result is a split landscape. Direct-to-chip is scaling rapidly due to its compatibility and lower barrier to adoption, while immersion is advancing in high-density niches where performance outweighs complexity.

Deployment Mix by Sector and Cooling Method (Q1 2026)

This divergence defines the current state of AI data center cooling: direct-to-chip is optimized for integration, immersion for maximum efficiency.

Inside the Technologies Shaping AI Cooling

The divide between direct-to-chip (D2C) and immersion cooling begins at the architectural level. Both rely on liquid’s superior heat transfer capabilities, but they differ fundamentally in how and where that cooling is applied.

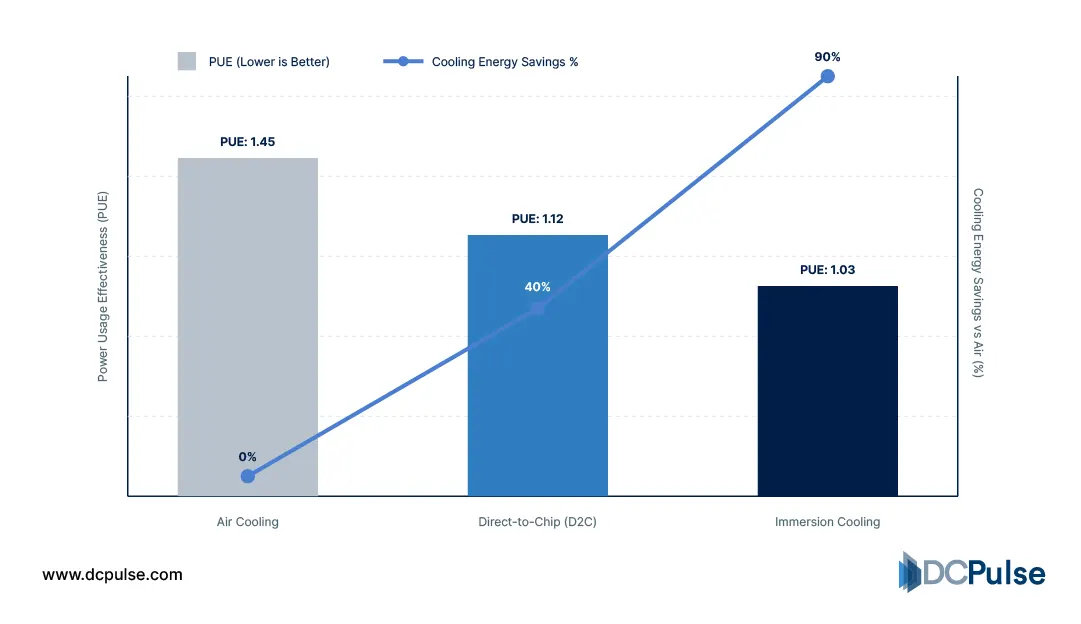

Direct-to-chip cooling uses cold plates mounted directly onto high-heat components such as CPUs and GPUs. Coolant circulates through these plates, extracting heat at the source and carrying it to a coolant distribution unit (CDU). The rest of the system (memory, storage, and networking) continues to rely on air cooling. This hybrid approach lets D2C integrate with existing server designs while significantly improving thermal efficiency where it matters most. TechTarget notes that liquid cooling systems like D2C offer far greater heat transfer efficiency than air, making them suitable for high-density workloads.
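The hybrid nature of D2C becomes concrete when a rack's heat is split between the liquid loop and room air. The sketch below models a hypothetical rack in which cold plates capture a fixed fraction of the heat. The `D2CRack` class, the `capture_fraction` value, and the 10 K loop temperature rise are illustrative assumptions, not figures from the sources cited here or from any vendor.

```python
from dataclasses import dataclass

# Approximate water properties near room temperature (assumptions).
WATER_DENSITY = 997.0  # kg/m^3
WATER_CP = 4180.0      # J/(kg*K)


@dataclass
class D2CRack:
    """Hypothetical direct-to-chip rack: cold plates remove part of the heat, air removes the rest."""
    rack_kw: float
    capture_fraction: float       # assumed share of heat picked up by CPU/GPU cold plates (0..1)
    loop_delta_t_k: float = 10.0  # assumed coolant temperature rise across the cold plates

    def __post_init__(self) -> None:
        if not 0.0 <= self.capture_fraction <= 1.0:
            raise ValueError("capture_fraction must be between 0 and 1")
        if self.loop_delta_t_k <= 0:
            raise ValueError("loop_delta_t_k must be positive")

    def liquid_kw(self) -> float:
        """Heat carried to the CDU by the liquid loop."""
        return self.rack_kw * self.capture_fraction

    def residual_air_kw(self) -> float:
        """Heat still rejected to room air by memory, storage, networking, and other parts."""
        return self.rack_kw - self.liquid_kw()

    def cdu_flow_l_per_min(self) -> float:
        """Coolant flow the CDU must supply: V_dot = Q / (rho * c_p * dT), converted to L/min."""
        v_dot_m3_s = self.liquid_kw() * 1000.0 / (WATER_DENSITY * WATER_CP * self.loop_delta_t_k)
        return v_dot_m3_s * 1000.0 * 60.0


if __name__ == "__main__":
    rack = D2CRack(rack_kw=100.0, capture_fraction=0.8)
    print(f"liquid loop: {rack.liquid_kw():.0f} kW, CDU flow ~{rack.cdu_flow_l_per_min():.0f} L/min")
    print(f"residual air load: {rack.residual_air_kw():.0f} kW")
```

With an assumed 80% capture fraction, the cold plates handle 80 kW at roughly 115 L/min. The remaining 20 kW still goes to air, which is as much as an entire rack from the 10–20 kW era. That residual load illustrates why a D2C deployment still needs meaningful air-cooling capacity.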

Immersion cooling takes a more radical approach by submerging entire servers in dielectric fluid. Heat is absorbed uniformly across all components and removed through either a single-phase system, in which the fluid stays liquid and is circulated through a heat exchanger, or a two-phase system, in which the fluid boils off hot surfaces and the vapor is condensed back into liquid. This eliminates hotspots and largely removes reliance on airflow, enabling higher density and improved energy efficiency. Research published on arXiv indicates that immersion systems can cut cooling energy consumption by up to 50% compared to traditional methods.
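A cut in cooling energy matters only in proportion to cooling's share of total facility power. One common way to express that share is Power Usage Effectiveness (PUE), defined as total facility power divided by IT equipment power. The sketch below treats the "up to 50%" figure as an upper bound and applies it to a hypothetical 1 MW IT hall. The 400 kW cooling load and 100 kW of other overhead are invented for illustration, and the model holds IT power constant.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT equipment power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


def pue_after_cooling_cut(
    it_kw: float, cooling_kw: float, other_overhead_kw: float, cooling_reduction: float
) -> float:
    """PUE after reducing cooling power by cooling_reduction (0.5 = a 50% cut); IT power held constant."""
    if not 0.0 <= cooling_reduction <= 1.0:
        raise ValueError("cooling_reduction must be between 0 and 1")
    return pue(it_kw, cooling_kw * (1.0 - cooling_reduction), other_overhead_kw)


if __name__ == "__main__":
    # Hypothetical 1 MW IT hall; the overhead figures are illustrative assumptions.
    it, cooling, other = 1000.0, 400.0, 100.0
    for cut in (0.0, 0.25, 0.5):  # 0.5 corresponds to the "up to 50%" upper bound
        print(f"cooling cut {cut:.0%}: PUE {pue_after_cooling_cut(it, cooling, other, cut):.2f}")
```

Under these assumptions, the best case moves PUE from 1.50 to 1.30. A facility where cooling is a smaller share of total power would see a proportionally smaller change.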

Cooling Efficiency Comparison (2025–2026)

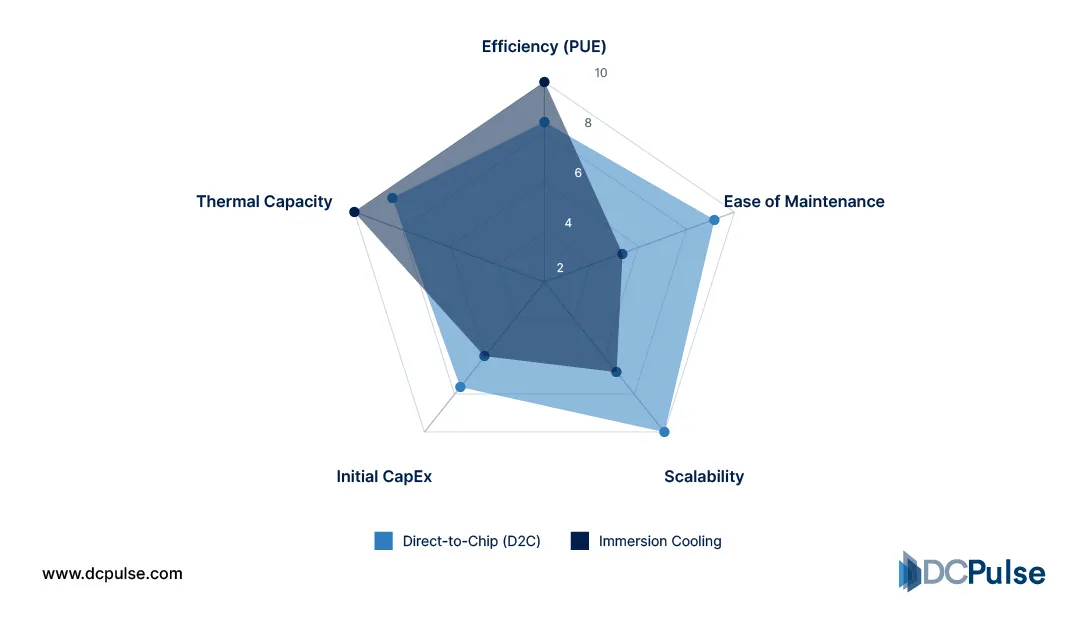

The trade-offs emerge in scalability and operations. D2C is easier to deploy at scale because it preserves familiar server architecture and maintenance workflows. Immersion, while thermally superior, introduces challenges in hardware compatibility, servicing, and facility design.

D2C vs. Immersion: Complexity vs. Efficiency vs. Scalability

In essence, D2C optimizes cooling where heat is concentrated, while immersion redefines the entire thermal environment, representing two distinct paths toward managing extreme AI workloads.

Who’s Betting on What? Inside the Industry Divide

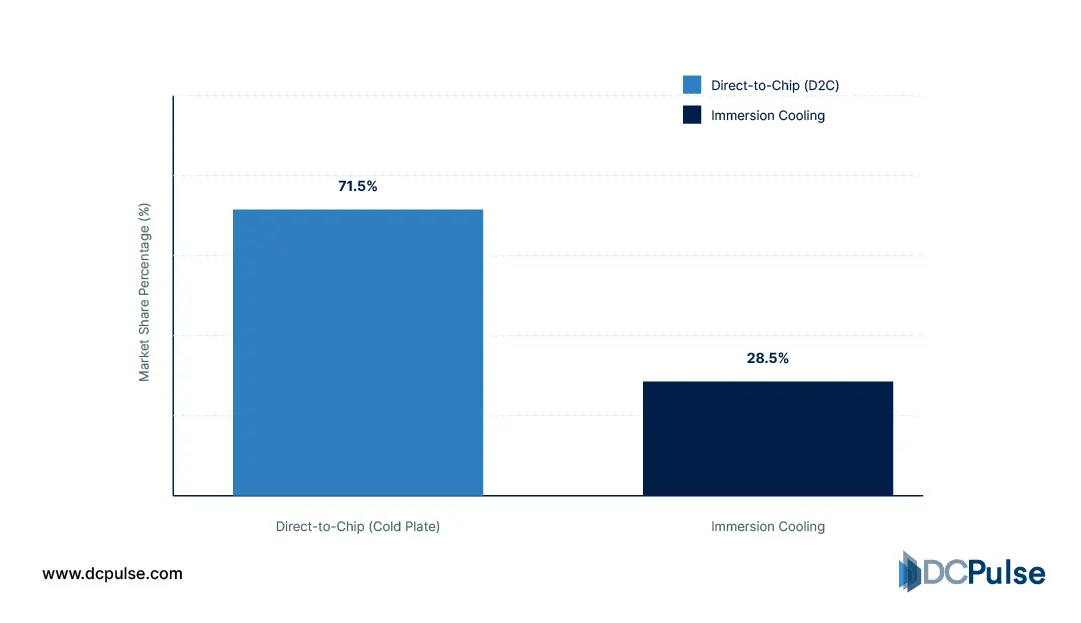

The industry’s approach to AI cooling is no longer uniform; it is splitting along lines of scalability, risk, and long-term strategy. While both direct-to-chip (D2C) and immersion cooling are advancing, their adoption patterns reveal a clear divide.

Direct-to-chip currently holds the dominant position, particularly among hyperscalers. Market analysis shows it remains the largest contributor to liquid cooling deployments, driven by its compatibility with existing data center infrastructure and its ability to scale alongside GPU-heavy workloads. GlobeNewswire highlights that hyperscalers, including major cloud providers, are investing heavily in liquid cooling, with D2C forming the backbone of these deployments.

Global Liquid Cooling Market Share (2026 Estimates)

This trend is reinforced by infrastructure realities. As GPU power densities rise, hyperscalers are scaling D2C systems across their facilities rather than redesigning them entirely. Data Center Dynamics reports that D2C is becoming a necessity for supporting next-generation AI hardware at scale.

Hyperscale D2C Adoption Share (2020-2026)

At the same time, immersion cooling is gaining momentum as the fastest-growing segment. While still less widely deployed, it is increasingly viewed as the solution for extreme-density environments where D2C may reach practical limits. Datacenters.com notes that immersion is transitioning from niche deployments toward broader hyperscale consideration.

Colocation providers, however, remain cautious. Serving many tenants with differing requirements demands flexibility, which makes immersion difficult to standardize and reinforces D2C’s position as the more practical near-term solution.

What emerges is a clear industry pattern: D2C is scaling as the default, while immersion is advancing as the challenger, gaining ground where performance demands outweigh operational constraints.

Which Will Dominate AI Data Centers?

Direct-to-chip will dominate in the near term, but it won’t be the final answer.

Today’s AI data center expansion is driven by speed and scale, and D2C aligns well with both. It allows operators to upgrade existing infrastructure, supports current server designs, and integrates readily with the broader hardware ecosystem. For hyperscalers deploying massive GPU clusters, this compatibility makes D2C the most practical and economically viable choice over the next several years.

However, as rack densities continue to rise toward extreme levels, the limitations of partial liquid cooling will become more apparent. Immersion cooling, with its ability to manage heat uniformly across all components, offers a fundamentally more scalable solution for ultra-high-density environments. Its efficiency advantages position it as a strong contender for next-generation deployments where thermal constraints push beyond what D2C can handle.

The outcome is not a clean replacement but a phased transition. D2C will dominate mainstream AI infrastructure in the short to medium term, while immersion will expand in parallel, targeting the highest-density and most specialized workloads.

In the long run, the balance may shift, but for now, the future of AI data centers belongs to a hybrid path, led by direct-to-chip and challenged by immersion.