For more than a decade, cloud computing followed a clear geographic logic: applications ran in large centralized regions, and users connected to them across the internet. That model is now rapidly evolving. As digital services demand lower latency, higher bandwidth, and more localized data processing, hyperscale cloud providers are pushing infrastructure outward, closer to where data is created and consumed.

Companies such as Amazon Web Services, Microsoft, and Google are expanding their architectures beyond traditional cloud regions into metro locations, telecom networks, and distributed edge environments. These deployments blur the once-clear boundary between centralized cloud infrastructure and edge computing.

The result is a new infrastructure model where compute, storage, and networking resources can operate across a continuum, from hyperscale regions to localized edge zones, enabling applications that require real-time responsiveness and geographically distributed processing.

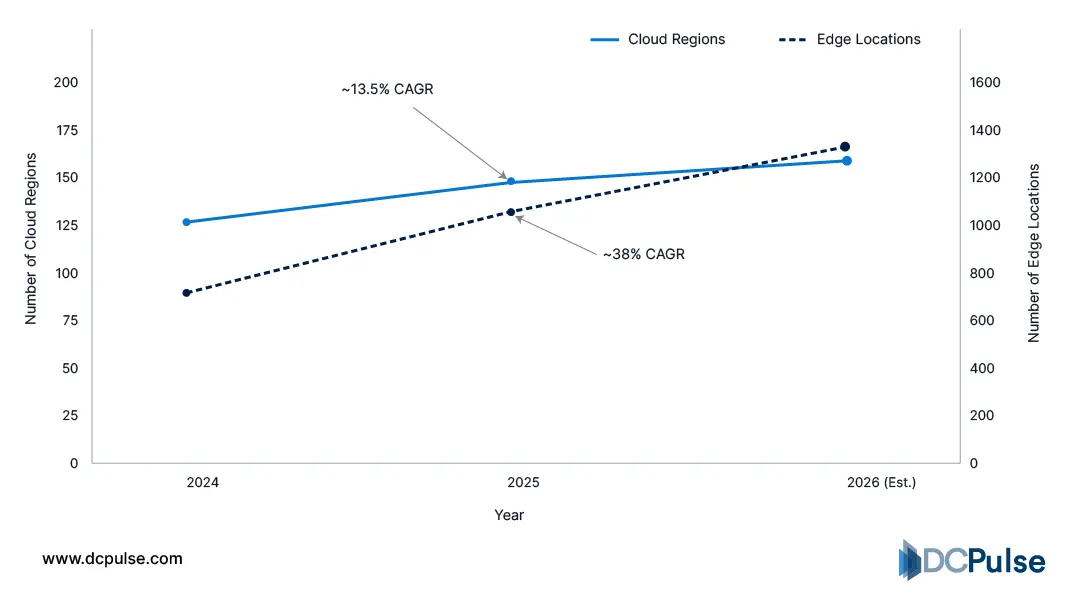

The Expanding Edge Footprint of Hyperscalers

Hyperscale cloud infrastructure has long been defined by large, centralized regions built to deliver compute and storage at massive scale. Hyperscalers are now extending that infrastructure into metro locations and telecom networks to support workloads that need low latency and local processing, deploying cloud services closer to end users through distributed edge environments.

Figure: Growth Comparison, Cloud Regions vs. Edge Deployments (2024-2026)

One prominent approach is the creation of metro-based edge zones that extend core cloud services into major cities. For example, Amazon Web Services operates Local Zones, which place compute, storage, and database services closer to population centers to support latency-sensitive applications such as gaming, media production, and real-time analytics.
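As a rough illustration of how such metro zones surface to developers, the sketch below separates Local Zones from standard Availability Zones in the output of the EC2 `DescribeAvailabilityZones` API. It assumes the boto3 SDK and configured AWS credentials for the live call. The helper names and the sample records are illustrative and do not come from this article.

```python
from typing import Dict, Iterable, List


def fetch_zones(region: str) -> List[Dict]:
    """Return all zones visible from a region, including opt-in zones.

    Needs boto3 and AWS credentials; the import is lazy so the pure
    helper below can be used without either.
    """
    import boto3

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.describe_availability_zones(AllAvailabilityZones=True)
    return response["AvailabilityZones"]


def local_zone_names(zones: Iterable[Dict]) -> List[str]:
    """Keep only entries whose ZoneType marks them as Local Zones."""
    return sorted(z["ZoneName"] for z in zones if z.get("ZoneType") == "local-zone")


# Hand-written sample records shaped like the API response (illustrative only).
sample = [
    {"ZoneName": "us-west-2a", "ZoneType": "availability-zone"},
    {"ZoneName": "us-west-2-lax-1a", "ZoneType": "local-zone"},
]
print(local_zone_names(sample))
```

With live credentials, `local_zone_names(fetch_zones("us-west-2"))` would apply the same filter to the zones reported for that region.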

Similarly, Microsoft has introduced Azure Edge Zones, enabling cloud services to run within telecom networks or metro facilities. These deployments allow enterprises to place applications nearer to users while maintaining integration with the broader Azure platform.

Meanwhile, Google is expanding its Distributed Cloud portfolio, which enables organizations to run Google-managed infrastructure across edge locations and on-premises environments while remaining connected to Google’s global cloud network.

Together, these deployments illustrate a clear shift: hyperscale cloud services are no longer confined to a limited set of large, centralized regions. Instead, providers are building distributed infrastructure layers that bring cloud capabilities closer to users, networks, and data sources.

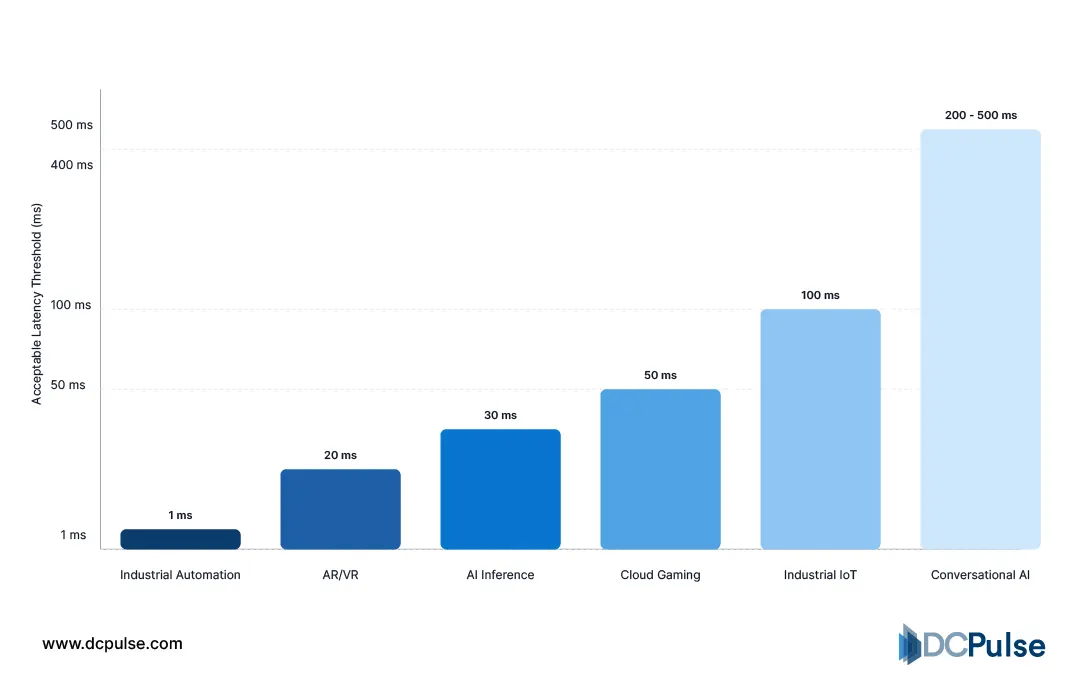

Why Are Hyperscalers Pushing Compute Closer to the Edge?

The primary driver behind hyperscaler edge expansion is latency. Applications such as real-time analytics, immersive media, industrial automation, and autonomous systems require response times measured in milliseconds. Processing data exclusively in distant cloud regions can introduce delays that make these applications impractical. By extending compute resources into metro locations and telecom networks, hyperscalers can dramatically shorten the distance between workloads and end users.
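The link between distance and delay can be made concrete with a back-of-the-envelope estimate. The sketch below computes only the propagation component of round-trip time, assuming light travels through optical fiber at roughly 200 km per millisecond. The two distances are hypothetical, and real latency will be higher once routing, queuing, and processing are included.

```python
FIBER_SPEED_KM_PER_MS = 200.0  # light in optical fiber covers roughly 200 km per millisecond


def round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2 * distance_km / FIBER_SPEED_KM_PER_MS


# Hypothetical distances, chosen only to contrast a far region with a nearby site.
for label, km in [("distant region", 2000), ("metro edge site", 50)]:
    print(f"{label}: {round_trip_ms(km):.1f} ms minimum round trip")
```

Under these assumptions the distant region costs at least 20 ms per round trip before any processing happens, while the metro site costs about half a millisecond. That difference is the budget edge placement is meant to recover.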

Figure: Latency Thresholds for Emerging Workloads

Another major factor is the integration of cloud infrastructure with 5G networks. AWS Wavelength, for example, embeds AWS compute and storage services within telecom operator networks, allowing developers to build applications that require ultra-low latency and high bandwidth.

Distributed cloud models are also emerging as a new architectural paradigm. For example, Google offers Distributed Cloud Edge, which allows organizations to deploy Google-managed infrastructure in edge locations while maintaining integration with Google Cloud services.

Together, these developments highlight a clear trend: hyperscalers are redesigning cloud architectures to enable compute resources to operate across a distributed continuum, thereby supporting new classes of latency-sensitive and data-intensive applications.

Strategic Moves Reshaping the Cloud-Edge Ecosystem

Hyperscalers are translating edge strategy into concrete infrastructure deployments and partnerships. Rather than building entirely new networks from scratch, they are extending cloud capabilities through telecom operators, metro data centers, and distributed infrastructure platforms. These moves are rapidly reshaping where cloud services can operate and how applications are delivered.

One of the most visible examples is AWS Wavelength, developed by Amazon Web Services in collaboration with telecom operators. Wavelength places AWS compute and storage services directly inside 5G networks, allowing developers to build applications that require extremely low latency for use cases such as cloud gaming, autonomous systems, and real-time video processing.

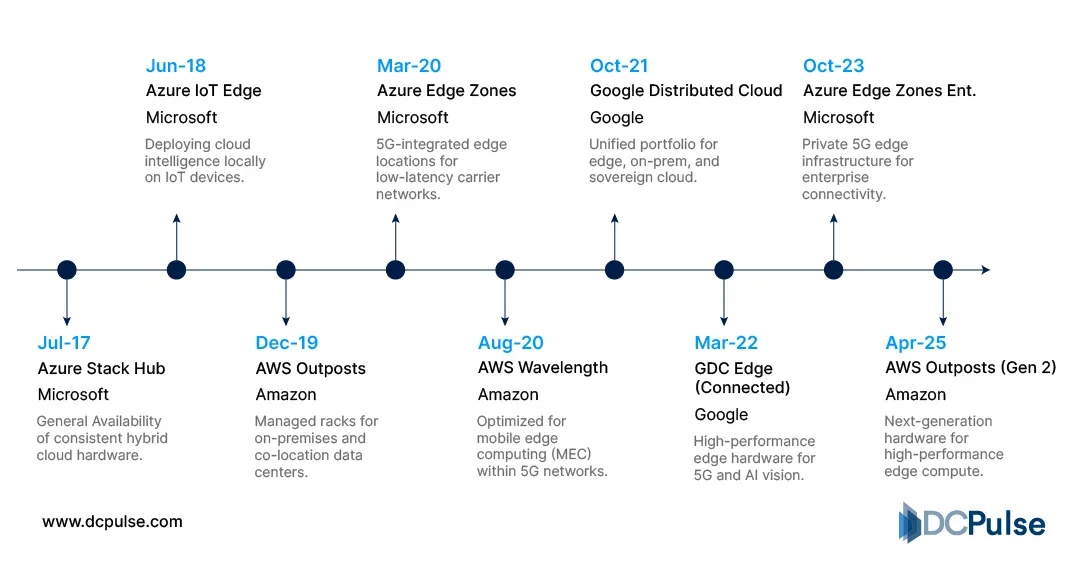

Figure: Key Hyperscaler Edge Platform Milestones

Similarly, Microsoft has expanded its Azure Edge Zones, which enable Azure services to run in telecom networks and metro locations while remaining integrated with the broader Azure cloud environment. These deployments help enterprises run latency-sensitive applications closer to users without losing access to centralized cloud services.

Meanwhile, Google is advancing its Distributed Cloud Edge platform, enabling organizations to deploy Google-managed infrastructure in edge locations and connect it to Google Cloud services. This approach supports industries that require local processing capabilities while maintaining cloud-scale management.

Together, these initiatives demonstrate how hyperscalers are expanding cloud architectures into telecom networks and metro environments, gradually dissolving the traditional boundary between centralized cloud regions and the edge.

Where Does Cloud End and the Edge Begin?

The shift toward distributed cloud is no longer theoretical. What began as an attempt to reduce latency for mobile and IoT workloads is evolving into a fundamental redesign of cloud geography. Hyperscalers no longer treat the edge as a peripheral add-on to centralized infrastructure; instead, they are building programmable, interconnected compute fabrics that span core regions, metro edge nodes, and telecom networks.

Companies like Amazon Web Services, Microsoft, and Google are investing heavily in this distributed architecture because enterprise workloads are changing. AI inference, industrial automation, autonomous systems, and immersive applications require near-real-time processing that traditional centralized regions cannot always provide. As a result, cloud providers are moving infrastructure closer to where data is generated.

Industry analysts such as Gartner and IDC increasingly describe this transition as the rise of distributed cloud and edge-native platforms, where application logic dynamically moves between locations depending on performance, cost, and data sovereignty requirements.
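To make the idea of placement driven by performance, cost, and data sovereignty more tangible, here is a minimal sketch of one possible selection rule: choose the cheapest site that satisfies a latency budget and an optional data-residency constraint. The `Site` type, the `choose_site` function, and every number below are hypothetical. Real placement engines weigh many more factors.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Site:
    name: str
    country: str
    latency_ms: float      # expected round trip to the users being served
    cost_per_hour: float   # relative price of running the workload there


def choose_site(sites: List[Site], max_latency_ms: float,
                allowed_countries: Optional[Set[str]] = None) -> Optional[Site]:
    """Pick the cheapest site that meets the latency budget and residency rule."""
    eligible = [
        s for s in sites
        if s.latency_ms <= max_latency_ms
        and (allowed_countries is None or s.country in allowed_countries)
    ]
    # Returns None when no site satisfies both constraints.
    return min(eligible, key=lambda s: s.cost_per_hour, default=None)


# Hypothetical sites and numbers, for illustration only.
sites = [
    Site("central-region", "US", latency_ms=45.0, cost_per_hour=1.00),
    Site("metro-edge", "DE", latency_ms=8.0, cost_per_hour=1.40),
    Site("telecom-edge", "DE", latency_ms=4.0, cost_per_hour=2.10),
]

batch = choose_site(sites, max_latency_ms=100.0)
realtime = choose_site(sites, max_latency_ms=10.0, allowed_countries={"DE"})
print(batch.name, realtime.name)
```

In this toy setup the latency-tolerant job lands in the central region because it is cheapest. The real-time job, restricted to German sites, lands on the metro edge, which is the cheaper of the two sites that meet its 10 ms budget.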

For enterprises, the strategic takeaway is clear: cloud architecture planning must now include edge placement strategy. The competitive advantage will not come from simply adopting cloud services but from optimizing where workloads run across a distributed cloud-edge continuum. Organizations that design for this model early will be better positioned to support next-generation digital services, while those that remain region-centric may struggle to meet emerging performance and latency demands.