For years, data center growth was constrained by land, power, and cooling. Silicon supply was assumed to scale in parallel: predictable, incremental, and reliable. The global chip shortages disrupted that assumption. When advanced processors became scarce, data center expansion slowed, not because operators lacked capital or demand, but because they lacked the chips to deploy capacity.

What began as a pandemic-era supply chain shock quickly exposed deeper structural realities: concentrated fabrication capacity at advanced nodes, long production lead times, and limited high-end packaging availability. As AI workloads surged, demand for high-performance GPUs and accelerators intensified competition for leading-edge wafers, tightening supply further. The constraint was no longer temporary logistics friction; it was manufacturing capacity colliding with exponential compute demand.

For hyperscalers and colocation providers, the implication was profound. Deployment velocity, once modeled around construction and power energization, became dependent on silicon allocation. Infrastructure planning shifted from “How fast can we build?” to “How reliably can we secure compute?”

The long-term impact is clear: chip availability is no longer a background variable. It is becoming a primary determinant of data center scale, timing, and return on capital.

Structural Bottlenecks in the Semiconductor Supply Chain

The widespread chip shortages that have disrupted infrastructure planning are not merely a legacy of supply chain chaos from COVID-19. They reflect deep structural constraints rooted in advanced fabrication concentration, packaging bottlenecks, and explosive demand for high-performance components.

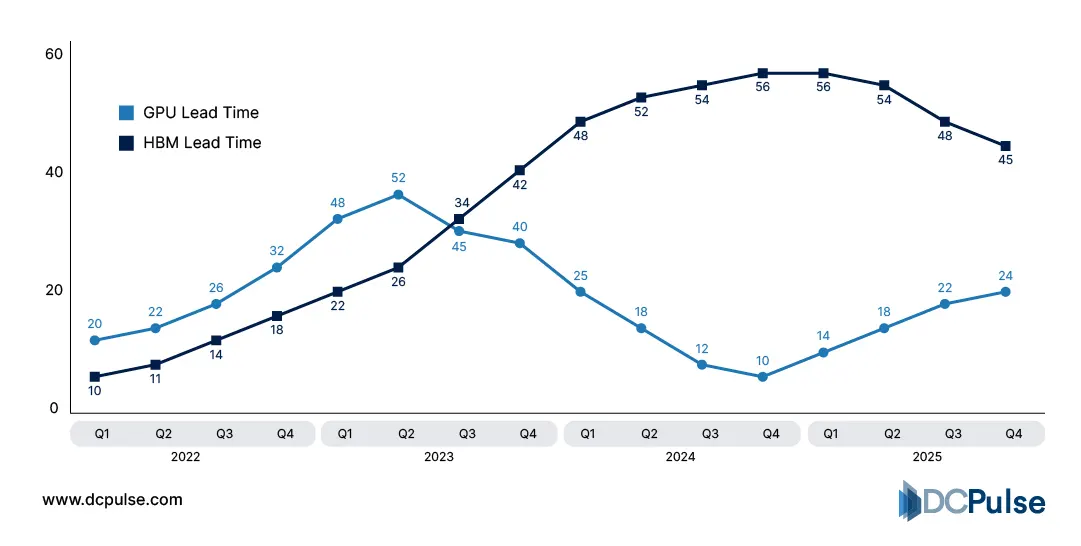

At the center of the shortage are advanced packaging and memory supply constraints. Modern AI accelerators, including NVIDIA’s leading GPUs, rely on High-Bandwidth Memory (HBM) tightly integrated through advanced 2.5D/3D packaging such as chip-on-wafer-on-substrate (CoWoS). However, packaging capacity has not kept pace with demand; industry data shows HBM lead times of 6-12+ months due to tight production and packaging queues. This means even if wafers are ready, many accelerators cannot be completed until packaging slots open.

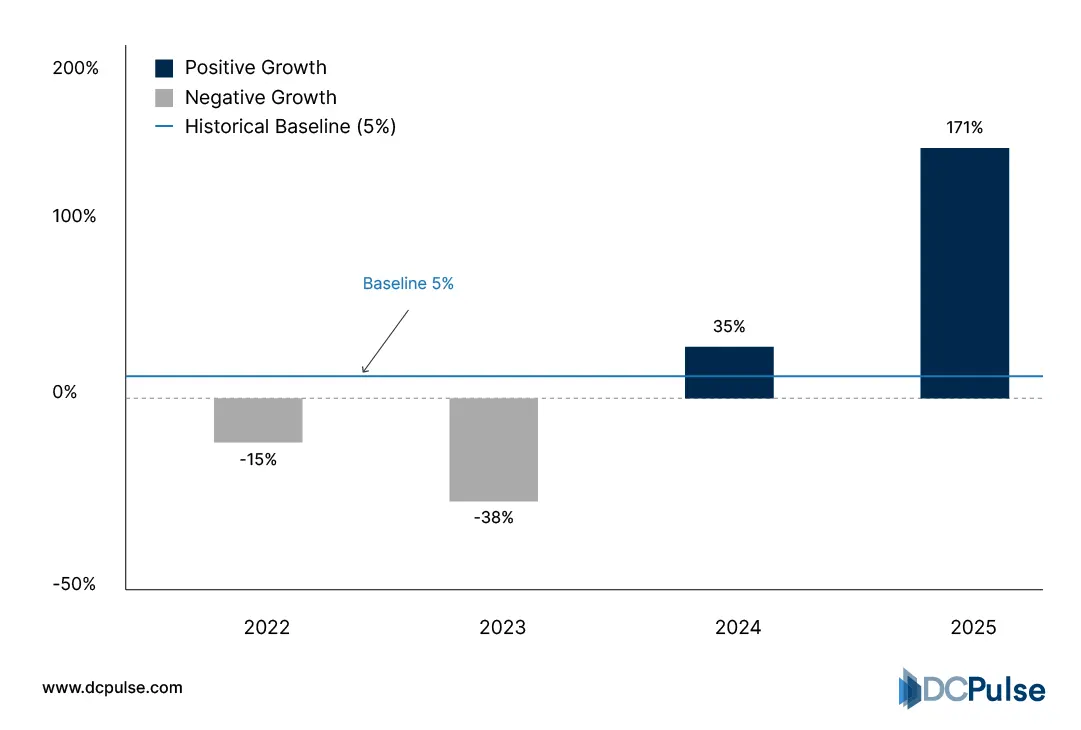

HBM vs. GPU Lead Times (2022–2025)
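
The packaging gate described above can be illustrated with a small sketch. It assumes an accelerator can be completed only once its slowest input is available, and then adds a fixed assembly time. The 39-week HBM figure is simply a point inside the 6-12 month range cited above; the wafer and packaging-slot figures, the 4-week assembly time, and every name in the code are hypothetical and chosen for illustration only.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SupplyInput:
    name: str
    ready_week: int  # weeks from order date until this input is available


def earliest_completion(inputs: list[SupplyInput], assembly_weeks: int) -> tuple[int, str]:
    """Return (completion week, name of the gating input).

    Simplifying assumption: assembly starts only when every input is
    available, so the slowest input sets the schedule.
    """
    gate = max(inputs, key=lambda item: item.ready_week)
    return gate.ready_week + assembly_weeks, gate.name


# Illustrative values only; just the HBM figure is drawn from the
# 6-12 month (roughly 26-52 week) range mentioned in the text.
inputs = [
    SupplyInput("logic wafers", 16),          # hypothetical
    SupplyInput("HBM stacks", 39),            # within the cited 6-12 month range
    SupplyInput("CoWoS packaging slot", 30),  # hypothetical
]

week, gate = earliest_completion(inputs, assembly_weeks=4)
print(f"Earliest completion: week {week}, gated by {gate}")
```

Under these assumed numbers the wafers are ready in week 16, yet the part cannot ship before week 43 because HBM is the gating input. Shortening the wafer lead time would not move the completion date at all.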

Advanced fabrication capacity itself remains highly concentrated geographically. Asia-Pacific accounts for over 70% of global foundry capacity, with Taiwan and South Korea dominating leading-edge nodes, while North America and Europe together make up the remainder. This concentration exposes global supply to both geopolitical tension and regional disruptions.

Regional Distribution of Semiconductor Foundry & Packaging Capacity (2025-2026)

The economics of scale and capital intensity further entrench these bottlenecks. Leading-edge fabs demand tens of billions of dollars in capex for fabrication and specialized equipment like EUV machines, which are limited in number and often have long procurement queues.

These structural realities (advanced packaging constraints, geographic concentration, and capital intensity) explain why short-term fixes rarely resolve deep supply issues and why chip availability will continue to shape data center procurement and growth.

Scarcity and the Economics of Scale

The semiconductor shortage has reshaped the economic calculus for data center operators in ways that extend far beyond delayed shipments. As AI and high-performance computing workloads grew explosively, demand for critical components like GPUs, DRAM, and advanced packaging outpaced supply, and the ripple effects are now influencing procurement, pricing, and ROI models.

One immediate economic consequence has been extended lead times and premium pricing for core compute components. GPU lead times for data center configurations have stretched into the 30-40+ week range, forcing operators to adjust deployment schedules and price expectations. This constrains capacity roll-outs and shifts workload prioritization toward available silicon allocations rather than planned performance tiers.

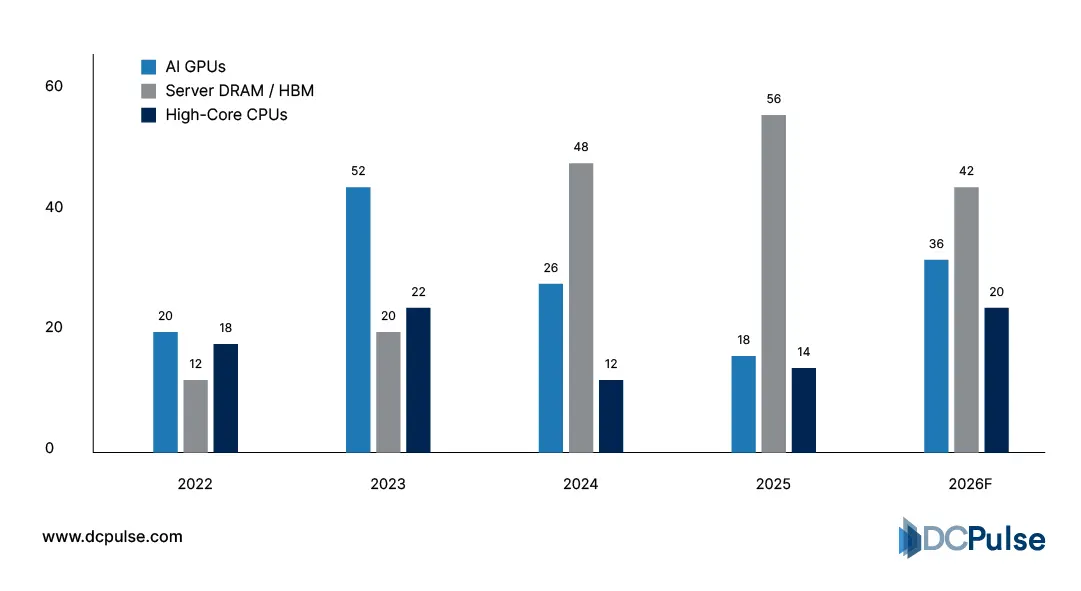

Component Lead Times - GPUs, DRAM, CPUs (2022–2026)

Memory constraints are equally acute. Analysts report that data centers will consume up to 70% of global DRAM supply in 2026, with prices for high-end memory rising sharply as a result. This dynamic pushes operators to allocate more capital to memory and away from other infrastructure costs, compressing near-term ROI.

DRAM Price YoY Increases vs. Historical Averages (2022–2026)

Broader macroeconomic forces also reinforce scarcity economics. Extended semiconductor lead times, often exceeding 24 weeks for some components, mean that hardware ordered today won’t arrive until well into 2026, locking in cost assumptions that may further inflate the total cost of ownership and delay revenue recognition.

Together, these shortages have altered longstanding assumptions about data center economics: procurement timing, inventory strategy, and capex allocation are now as consequential to ROI as power pricing and real estate.
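
A toy payback calculation shows why procurement timing matters to ROI. The sketch below assumes capital is committed at week 0 and that revenue begins only once hardware arrives; it ignores discounting, price changes, and ramp-up. The capex and weekly revenue figures, and the function name `payback_weeks`, are hypothetical. The 24-week and 40-week delays echo the lead times mentioned above.

```python
import math


def payback_weeks(capex: float, weekly_net_revenue: float, delivery_delay_weeks: int) -> int:
    """Weeks from capital commitment until cumulative net revenue covers capex.

    Simplifying assumptions: capital is committed at week 0, revenue is flat,
    and no revenue is earned until the hardware has been delivered.
    """
    if weekly_net_revenue <= 0:
        raise ValueError("weekly_net_revenue must be positive")
    return delivery_delay_weeks + math.ceil(capex / weekly_net_revenue)


# Hypothetical figures for illustration only.
CAPEX = 10_000_000
WEEKLY_NET_REVENUE = 125_000

for delay in (0, 24, 40):
    weeks = payback_weeks(CAPEX, WEEKLY_NET_REVENUE, delay)
    print(f"delivery delay {delay:>2} weeks -> payback after {weeks} weeks")
```

In this simplified model each week of delivery delay pushes payback out by one week, so a 40-week lead time stretches an 80-week payback to 120 weeks. A fuller model would also discount cash flows and reflect component price movements during the wait.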

Strategic Responses to Scarcity: Custom Silicon, Foundries, and Packaging Expansion

The semiconductor supply imbalance has sparked strategic adaptation across the data center and semiconductor ecosystem. Hyperscalers are responding by co-designing custom silicon and securing long-term allocations, while foundries and governments are investing in capacity expansion and supply-chain resilience.

Leading cloud operators have accelerated moves toward in-house and custom AI silicon, reducing reliance on third-party chips. According to industry analysis, companies such as Google, Microsoft, Meta, and Amazon are deploying bespoke AI accelerators tailored to their workloads, increasing performance and reducing dependency on external suppliers. This shift reflects a broader strategic pivot away from generic silicon procurement toward “silicon sovereignty,” meaning control over the chips that drive their core services.

Proportion of Custom vs. Third-Party Silicon in Hyperscaler AI Fleets (2022-2026)

Foundries themselves are targeting bottlenecks. Taiwan Semiconductor Manufacturing Company (TSMC) has publicly acknowledged that its advanced-node capacity falls short of client requirements by a factor of about three, underscoring the persistent supply gap even as fabs expand. TSMC plans aggressive capital expenditure and new facilities aimed at easing pressure over the next several years, though meaningful volume will come online only after multi-year lead times.

Addressing downstream bottlenecks, advanced packaging capacity is also expanding. For example, Amkor Technology broke ground on a major advanced packaging campus in Arizona, supported by CHIPS Act funding, an effort to localize packaging and test resources crucial for high-performance accelerators.

These adaptations (vertical silicon integration by hyperscalers, foundry capex ramps, and localized packaging hubs) are reshaping the semiconductor and data center value chain, turning scarcity into a catalyst for structural realignment rather than merely a temporary disruption.

Silicon as a Strategic Variable in Data Center Growth

Chip supply constraints are transitioning from episodic disruption to a persistent strategic variable that will shape data center economics and deployment priorities for years. Advanced foundries like TSMC are forecasting extended capacity tightness driven by AI demand, with leading-edge nodes fully utilized and packaging bottlenecks persisting well into 2027. This structural imbalance forces operators to rethink procurement, capex phasing, and workload allocation around silicon availability rather than build schedules alone.

Memory and accelerators are especially acute bottlenecks. Analysts project that data centers could command over 70% of high-end DRAM supply by 2026, pushing prices up and making memory a significant cost driver for infrastructure spend.

The strategic implication is clear: capacity planning must integrate supply-chain constraints as a first-order risk, not a contingency. Operators that secure multi-year allocations, co-invest in custom silicon, and align workload forecasts with lead-time realities will preserve ROI and execution velocity. Meanwhile, laggards risk delayed rollouts, higher unit costs, and compromised competitive positioning.